We all know it and we still do it. Most people tend to be cautious about where and whom they appease, but it still happens, and for the most part there is no impact and there are no consequences. Yet in some cases there are, and are we aware of them? Are the appeased parties aware? That side still matters, because the appeaser and the appeased nearly always act from a place of innocence, or at least without knowing what will happen.

Today the BBC gives us one side of it. The article ‘Clearview AI fined in UK for illegally storing facial images’ (at https://www.bbc.co.uk/news/technology-61550776) has another side, one that most people are ignoring, either eagerly or unknowingly.

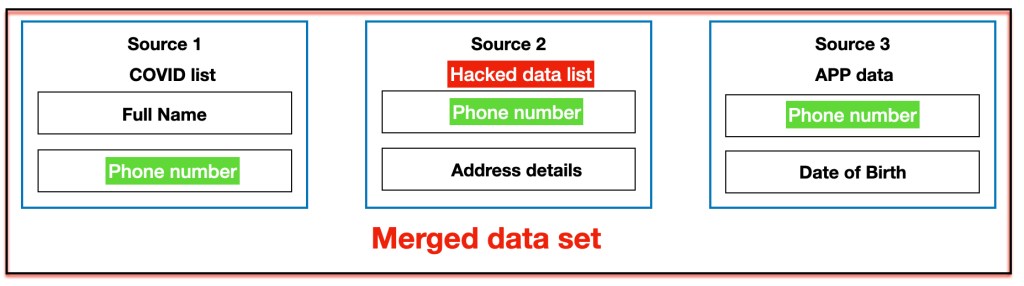

We see: “Clearview AI takes publicly posted pictures from Facebook, Instagram and other sources, usually without the knowledge of the platform or any permission.” And John Edwards, UK Information Commissioner, said: “The company not only enables identification of those people, but effectively monitors their behaviour and offers it as a commercial service. That is unacceptable.” My initial answer is ‘And?’ This is a foundation of Facebook: granular data analysis. Let's face it, the images were given to the internet, and “but effectively monitors their behaviour” is merely the next step. You see, there is a side that we want to ignore. There is the setting of ‘publicly posted pictures’; it therefore becomes PUBLIC DOMAIN (in some cases). Granted, not in all cases, and there we need to ask Meta whether THEIR rules were broken.

And then we get the whopper: “People expect that their personal information will be respected, regardless of where in the world their data is being used.” Where is that set in stone? I mean, really. Where is the law that states that this has to happen? And then we get the part that matters: “When Italy fined the firm €20m (£16.9m) earlier this year, Clearview hit back, saying it did not operate in any way that laid it under the jurisdiction of the EU privacy law the GDPR. Could it argue the same in the UK, where it also has no operations, customers or headquarters?” And now we see the setting: “it did not operate in any way that laid it under the jurisdiction of the EU privacy law the GDPR”. I am not debating or opposing, I am asking. Because if that is the case, if that is true, then the actions against Clearview are close to pointless, and let's be clear, Russia and China might be doing EXACTLY the same thing. It was on the internet and this is not new.
To see that, we need to go back to September 7th 2021, when I wrote ‘As banks cut corners’ (at https://lawlordtobe.com/2021/09/07/as-banks-cut-corners/). There it was banks versus organised crime, and the image (see below) remains the same, but now it is set on a commercial stage, with connected images to boot.

The BBC article is less than an hour old. I wrote about similar settings, out in the open, 8 months ago. So when John Edwards, UK Information Commissioner, states “The company not only enables identification of those people, but effectively monitors their behaviour and offers it as a commercial service. That is unacceptable.”, consider the word “unacceptable”: he does not state that it is illegal. Interesting, is it not? So what exactly are these fines for? On what legal transgression are they based?

We see the Data Protection Act parts when we are given the following:

- use the information of people in the UK in a way that is fair and transparent
- have a lawful reason for collecting people’s information
- have a process in place to stop the data being retained indefinitely
- meet the higher data protection standards required for biometric data

So what defines ‘fair and transparent’? I know what the words mean, but what do they mean here? Have a lawful reason? It is public domain, so a collector has a perfectly valid reason, does he or she not? And when we get to the word ‘indefinitely’, we could set a term of 100 years, because that is not indefinite, so where is the definition of indefinite given? As for biometric data, we accept “physical characteristics — that can be used to identify individuals”, but there is one side that is less clear. It is the phrase “used to identify individuals”: what if the photo is not the identifying part, but the data is? I am merely stating a fact: most photos are not the greatest source of identification. For example (see below), how tall is Peter Dinklage? This photo will not give that away, will it?

And this Data Protection Act only works for the UK: if British people were photographed outside the UK, the photo is out of consideration, is it not? Consider ‘people in the UK’; what if they were in Rome, Amsterdam or Brazil? How would that rule apply? All these questions come up, and for many of them there might be rules that stop certain parts, but not all parts, and Clearview has 20,000,000,000 images. We would need to check them all, and that would take a group of 20,000 people months, if not a whole year. So who pays for that part? All whilst there are parts that rely on public domain. It is a dangerous setting.

I get it, it is dangerous, and my piece on the banks merely makes things worse; it makes the data more complete, and that is not merely banks. Consider the data Dunnhumby has, the data collectors, the panel creators: dozens of data agencies, and consider that several are outside the UK and EU. What happens when that data is combined? This mess is a whole lot worse than anyone considers, and it was not due to big tech; it was due to greed-driven people seeking new currencies, and people are currency. I am not stating that Clearview is innocent, but they got here because the laws were lacking for decades. Now that the data sources are there, it is already too late. Whatever music John Edwards, UK Information Commissioner, is playing, it suits his ego and the ego of his friends. For the people it is largely too late, and it has been for a while, a setting I saw a long time ago and illustrated last September. I knew this because I used to do this, and I was good, very good, at doing this. So I leave you to wonder just how protected you are, because you are not, but you will learn that soon enough.