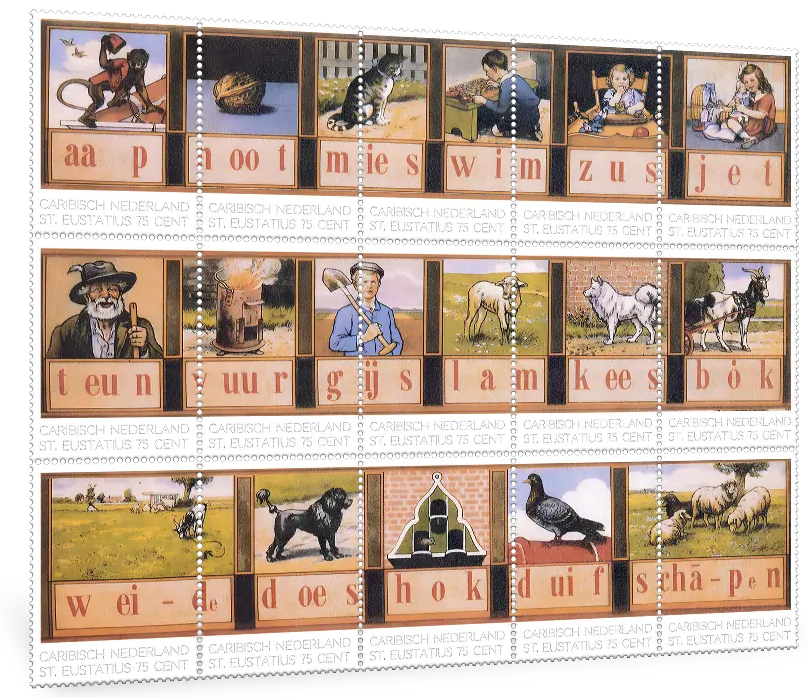

That is where I am at present. I have been knocking my brain around on designing games that can be used for teaching linguistic skills, but with a difference. As such I have been focusing on Latin, which was one of the languages that this solution would traverse; from there we get to Italian, considering that people like Gaius Marius reformed the Roman army. The thought is to create a few games (heavily based on MB games) to aid in the setting of this. So here I was (here I still am) converting the 1975 Tank Battle into a game with chariots. The game play is almost the same, and whilst they have no flags on the tanks, they could gain an archer when they get to the end. The game is played by instructing (in Latin) a chariot to move, and as such you get linguistic training. Then we get to ‘Conquest of the Empire’, which is now called ‘Securing the Empire’, where we get to play a tactical game. These additions put the gamer in a linguistic mode; they will breathe Latin in no time, no matter how limited this is. And there were more games: dice games were massively popular, so introducing them would aid this. Then there is the ‘Leesplankje’, a Dutch 19th century invention that could aid here (and in other language models).

And the setting would be to use Latin phrases to aid in pronouncing certain Latin words. The idea is that these games would also be used to propel French, English, Italian, Portuguese, Japanese, Arabic and a few other languages. So even as the setting is based on other stages, the games could be introduced simultaneously. So here I am, considering what games to add to that setting. There is no need to add certain games, but several might be used in a different way.
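As a rough illustration of the command idea, here is a minimal Python sketch of how a spoken or typed Latin phrase could drive a chariot. Everything in it is an assumption made for illustration: the 15 by 15 field, the `Chariot` class, the function names, and the rule that an archer is only handed out at the far end. It knows only two phrases, ‘currum unum promove’ and ‘Da huic currui sagittarium’; a real game would need a properly reviewed vocabulary.

```python
from dataclasses import dataclass

BOARD_SIZE = 15  # assumption: a 15 by 15 field


@dataclass
class Chariot:
    row: int = 0              # 0 is the home row, BOARD_SIZE - 1 is the far end
    has_archer: bool = False


def advance(chariot):
    """Move the chariot one square forward, stopping at the far end."""
    chariot.row = min(chariot.row + 1, BOARD_SIZE - 1)
    return True


def give_archer(chariot):
    """Grant an archer only to a chariot that has reached the far end."""
    if chariot.row == BOARD_SIZE - 1:
        chariot.has_archer = True
        return True
    return False


# Hypothetical command table: normalized Latin phrase -> game action.
COMMANDS = {
    "currum unum promove": advance,
    "da huic currui sagittarium": give_archer,
}


def apply_command(chariot, spoken):
    """Return True if the phrase was understood and the move was legal."""
    phrase = " ".join(spoken.lower().split())  # ignore case and extra spaces
    action = COMMANDS.get(phrase)
    if action is None:
        return False  # unknown phrase: the player has to try again
    return action(chariot)
```

The point of the table is that the player only makes progress by producing the phrase correctly, which is where the linguistic training comes from; swapping the table for French or Japanese phrases would leave the game logic untouched.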

Legality

Legality is at the forefront of my mind because this is based on IP that is not mine. I feel decently secure that a game like Tank Battle has shed its IP protection, as they are unlikely to continue the legal protections. There is however the foundational protection a game designer has, and the question becomes how much you need to change a game for it to no longer fall under that protection, so that it is not seen as illegal. Making a 10 by 10 field into a 15 by 15 field and making tanks chariots might be enough, but I am not certain. Also, it is a game within a game/simulation. I reckon the law was not ready for that, because there is no physical version (yet), but that is a worry for another day. For now I say ‘currum unum promove’ and ‘Da huic currui sagittarium’ and worry about tomorrow’s things tomorrow, because first the idea is to advance certain settings and create the elements to give this beast mass and power, because that is what makes great IP in the end.

The idea is nice and essential, but as long as it remains an idea it is only the foundation. When we add substance to the idea, it means we have seemingly worked out certain settings, and it pays me too, because my mind can concoct all the things in the universe, and I have seen time and time again that I can move at speeds that most quantum computers see as madness (caressing my ego here). Still, the setting when completed will be decent. The idea is not to replace language teachers, but to offer these services to millions of people who are out of reach of such teachers, and there is a rather nice stage where these teachers will embrace that setting to educate more students in languages than is currently possible and quite soon will no longer be possible, because that is also the impact of global recessions.

So we get sources like ABC News claiming that “The global economy is currently avoiding a worldwide recession”, but the thought has become “is it though?” There are too many elements in play and something has to give. Education is the usual first victim in an economic war, and it will be trivialized through “We are keeping our finger on the pulse and there is going to be an adjustment soon”, but they are talking in styles that leave at least one generation partially uneducated on a near global stage. I saw this at least 2-3 years ago, because this setting of languages was seen by me at least that long ago, and I saw the business sense that a player like Ubisoft could employ: they had at least 75% ready before they ever started, and that is a nice setting for a software solution maker, because that is what this will become. I think I have worked on this from 2025 onwards, but the idea to advance certain matters to all languages is decently new.

Anyway, as we are told (13 minutes ago by the Guardian) that ‘Trump claims to be on verge of approving peace deal with major Iranian concessions’, I wonder how this is possible when we heard on March 15th 2026 that “Militarily, “we’ve essentially defeated Iran,” though he stopped just short of an official final victory declaration because he maintained the U.S. was still involved in delicate diplomatic talks”. Yes, tell me another one. But all this is hitting the United States economically, and even though it is only impacting 70 million students, the fact that the US debt surpasses its GDP sets a strong need for a software solution, optionally one that does not claim a fake AI field. This is the benefit that Ubisoft could wield, because there is a certain amount of certainty that the business branch of the world might not see an uneducated workforce as beneficial to them. And for others, seeing a solution that takes away the workforce pressure of teachers that aren’t to be found gives a certain solace to the soul of these captains of industry.

So you tell me how exactly “the global economy is currently avoiding a worldwide recession” and I will show you where they are failing you, because education and infrastructure are the first victims in a recession.

So you all have a great day. It is Saturday here now, and whilst Toronto is enjoying its Friday happy feelings, I can tell them that this is overrated, because I have already seen that.