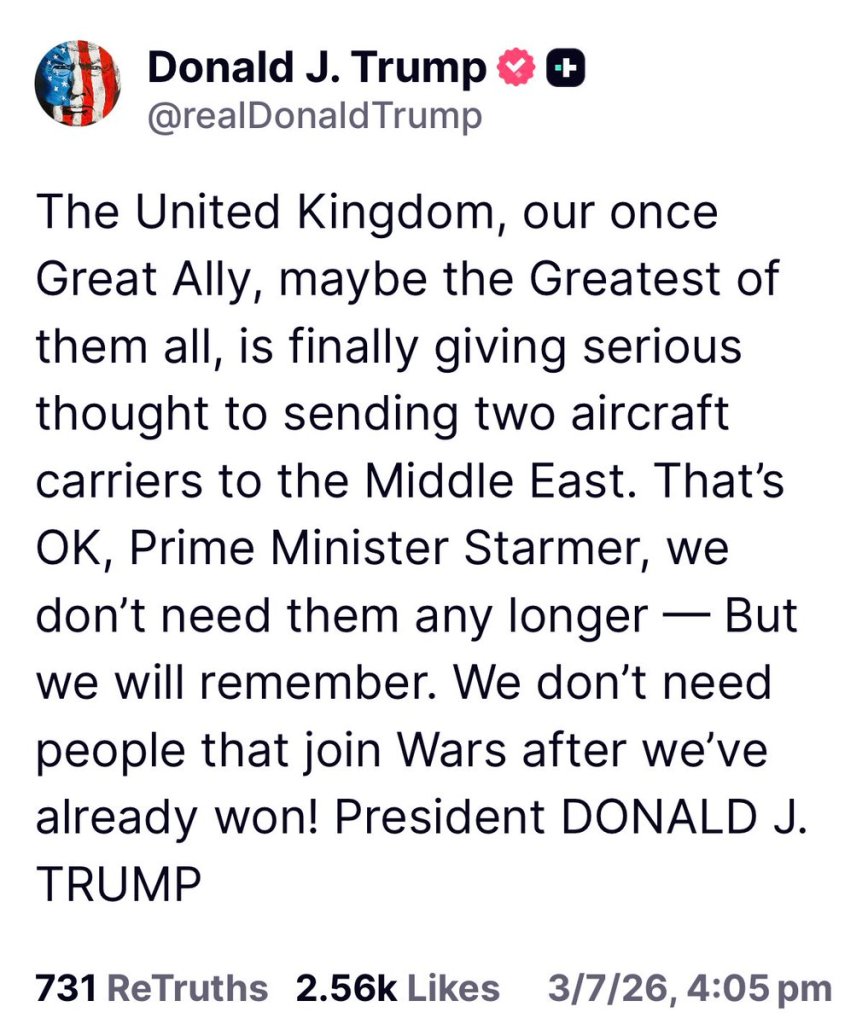

That is the setting most of the time, so ABC gives us (at https://www.abc.net.au/news/2026-03-17/middle-east-live-updates-march-17-2026/106462358) “A tanker has been struck by an unknown projectile while anchored near the Strait of Hormuz. Earlier, US President Donald Trump turned his ire on European allies who he claimed “weren’t that enthusiastic” about helping the US secure the passage. The threat of Iranian missiles and drones targeting oil tankers in the strait has effectively closed the shipping channel, amid the country’s conflict with the US and Israel.” With the added ‘Rockets and drones fired at US Embassy in Baghdad’ an hour ago. Consider that President Trump gave us (on March 8th, Politico) ‘Trump says Starmer seeking to join Iran war ‘after we’ve already won’’ so, that was 9 days ago? What changed? Then yesterday, the Guardian gave us (at https://www.theguardian.com/world/live/2026/mar/16/iran-war-live-updates-news-oil-trump-hormuz-dubai-airport-israel-targets) “As Donald Trump expresses frustration with countries declining to send warships to reopen the strait of Hormuz, the response remains muted among those he directly called upon.” And this happened a mere 4 hours ago. Where are the vessels of the United States? Where are their minesweepers? Simple questions, and it defies understanding why this is not front and centre everywhere. So when the Sydney Morning Herald adds spice to the setting (at https://www.smh.com.au/world/north-america/with-10-damning-words-pete-hegseth-says-the-quiet-part-out-loud-20260314-p5oafr.html) with ‘With 10 damning words, Pete Hegseth says the quiet part out loud’ where we see “US Defence Secretary Pete Hegseth believes the media has not been sufficiently effusive about the success of the American military operation against Iran.

He had just finished speaking about the massive damage inflicted upon the regime in Tehran – its leadership, its missile stocks, its navy, its weapons infrastructure – when he turned his attention to the Pentagon press pack.” Now, I am willing to accept that I have not been part of any defence department for 43 years. I can assure you that a certain clarity is required in communication (from the defence side) and whilst I feel ready to blame the press on several matters, they are massively without blame here. The March 8th setting was the first damning setting. Then yesterday I lighted on the ‘Just for fun’ setting that President Trump gave us. The tactical setting is that Kharg Island provides a sea port for the export of up to 90% of Iran’s oil products, as well as supplying storage for up to 30 million barrels. Bombing the hell out of it might have been essential, but it is a mere export point. There are 10 refineries doing the bidding of capturing oil and whilst I was able to devise methods of stopping those settings, the clear message is to bomb those 10 locations to really put pressure on Iran. So when were they done? No, as I personally see it, President Trump wants that oil. This is the clear setting that is tactically seen and now that 2,500-5,000 boots are getting on the ground, that setting becomes the pressure point that Iran can put on the United States. So whilst I created IP to close harbours and disable trains, stopping the bulk of oil transits, it was merely one stage that Saudi Arabia, Qatar and the UAE could do to take pressure away from themselves and as such I gave Saudi Arabia and the UAE that IP. I did my thing to stop the war from going towards the Gulf states.

So what are we now left with?

Well, the SMH also takes care of that. We are given “As former CNN Pentagon reporter Barbara Starr noted, it’s possible that Ellison will be none-too-pleased about Hegseth’s implications.

Starr, a 21-year veteran of the defence beat, pointed out on X that CNN has sent personnel to combat zones for decades, with some even losing their lives. “You have a legal and moral obligation to defend the free press, even the ones you don’t personally like,” she told Hegseth.

As a former TV presenter before he was tasked with running the world’s most powerful military, press freedom should be Hegseth’s instinct. His comments today – and his vainglorious move to banish press photographers from his briefings – suggest he sees the media more as a vassal to serve his interests.” I can get behind that thought. As such there are sides to this entire setting that aren’t reported on enough. The first one was that no formal declaration of war was ever given by the United States. As such we were given: “the Trump administration officials have offered various and conflicting explanations for the war, such as to ward off an imminent Iranian threat, to pre-empt Iranian retaliation against US assets after an expected Israeli attack on Iran” My issue here is that the international courts in The Hague might side with Iran concerning the seemingly unprovoked attacks on Iran (I know that is hilarious). Iran has been waging proxy wars for decades and that is the power of a proxy war. I reckon that the attacks by Israel and the United States leave a bitter taste in the eyes of the law. Israel is decently clear because of all the attacks by Iran via Hamas and Hezbollah, but the idea given “to ward off an imminent Iranian threat” is laughable. It is like New Zealand attacking Australia: the Sopwith Camel doesn’t have the range to cross that distance and as far as I know New Zealand does not have an aircraft carrier. The same applies to Iran. There is no way that an attack can result from Iran. Even lone wolf attacks are unlikely to succeed and the United States still has its boy-scout organisations (FBI, CIA, DIA) in place, as such they can either do their job or they cannot.

As such my speculative view was that the United States needed the oil that Iran has (for now). After failing to get to Canada’s rare earths (the 51st state attempt), Greenland’s resources (through failed annexation) and Venezuela’s oil (which seems simply useless to the United States) the United States is now going for the Iranian oil. After that merely Russian oil remains (and Ukraine is doing something about that too) so what is left? I might be wrong in all this and there is a simple way to show me I am wrong. Merely bomb the 10 refineries. Several sources seemed to side with me on this as we are given ‘GOP Sen. Lindsey Graham Brags ‘We Are Going to Make a Ton of Money’ on Iran War’, which was given to us on March 9th. So as we were given “Graham seemingly suggested that the conflict with Iran as well as President Donald Trump’s abduction of Venezuelan President Nicolás Maduro aim to help the United States take control over major oil reserves. “Venezuela and Iran have 31% of the world’s oil reserves. We’re going to have a partnership with 31% of the known reserves. This is China’s nightmare. This is a good investment,” he said.” As well as ““We’re going to blow the hell out of these people,” Graham said, adding that “nobody will threaten [the U.S.] in the Strait of Hormuz again.” He also said there could be a collapse of Iran’s leadership.
“This regime is in a death throe now, it is gonna be on its knees, it’s going to fall, and when it falls we’re going to have peace like no other time,” he added.” It seems that after 9 days he was proven on nearly all fronts and now that it is out in the open that the United States needs oil (because they have so little at present) there is now the setting that the United States is seemingly too broke to pay its bills. As I see it, the moment the boots come on the ground, the media will report on nearly everything and that will put team Trump/Hegseth in a new folly and in the limelight, because if I could figure this out over the last decade and now we get that David Kelly (JP Morgan, as per October 2025) can figure this out, you should wonder why others couldn’t figure this out. I get that I am a nobody in all this, but David Kelly is the Chief Global Strategist and Head of the Global Market Insights Strategy Team of JP Morgan and he is a voice to consider no matter how you slice it.

So now the Guardian (read: recently) gives us “March 2026, Hegseth stated during a press briefing that US forces in Iran would show “no quarter, no mercy” to enemies. Analysts and Sen. Mark Kelly pointed out that a “no quarter” order—meaning to take no prisoners and kill them instead—is a direct violation of international law, specifically Article 23(d) of the 1907 Hague Convention IV.” All whilst media like the Conversation give us “Legal scholars have argued that Hegseth’s actions, particularly regarding the Venezuelan boat strikes and statements on the Iranian conflict, could expose him to investigations for violations of international and U.S. criminal law.” As such I reckon that both President Trump and Pete Hegseth fear the international courts. Iran optionally has a case here (I rely on optional as they have done plenty of bad things, among them attacking Saudi Arabia without a formal declaration of war), so it makes sense that Pete Hegseth is in the stage that he wants to trivialize the international courts of law in The Hague, which is set through “The International Court of Justice, or colloquially the World Court, is the principal judicial organ of the United Nations (UN). It settles legal disputes submitted to it by states and provides advisory opinions on legal questions referred to it by other UN organs and specialized agencies. The ICJ is the only international court that adjudicates general disputes between countries, with its rulings and opinions serving as primary sources of international law. It is one of the six principal organs of the United Nations.” It was established in 1945 and it should now puzzle all the readers why António Guterres remains silent on this. It merely lends seeming validity to my thoughts on the United States being broke. The person who attacks Israel at any opportunity he gets has remained silent on too many settings we are seeing here.
Even the rebuke on the settings of Pete Hegseth ‘attacking’ the international courts should have put him up in arms. There is the smallest notion that the media had not covered it, but I doubt that. As I see it, the seat that António Guterres holds is seen as one of the 100 most powerful seats in the world. It might not be as powerful as that uncomfortable seat that the Pope has, but that would be a buttock conversation.

So I think I have given you something to think about and consider why the bulk of the refineries are left untouched, because that creates the wealth of Iran and isn’t that the superiority of any army? We are given “Sun Tzu’s The Art of War emphasizes that the supreme art of war is to subdue the enemy without fighting, making the destruction of an opponent’s economic base (or wealth of a nation) a superior strategy to direct physical conflict. Sun Tzu advises that a protracted war exhausts a state’s resources, dulls weapons, and dampens morale, meaning attacking an opponent’s economic ability to sustain a fight is crucial.” And I wrote about that on March 8th (and before that too). At https://lawlordtobe.com/2026/03/08/ones-creative-process/ the story ‘Ones creative process’ gave you the setting that the harbours and railway of Iran should be destroyed and I was happy to hand the IP that could set that in a certain view of certainty to both Saudi Arabia and the UAE. Because I am just that sort of guy. It is never about personal profit in some stage of war and these two countries were hammered with drones and missiles. As such I did more than talk (are you watching this, Pete Hegseth?), I delivered.

So you all have a great day and enjoy the day because Vancouver just joined us this Tuesday.