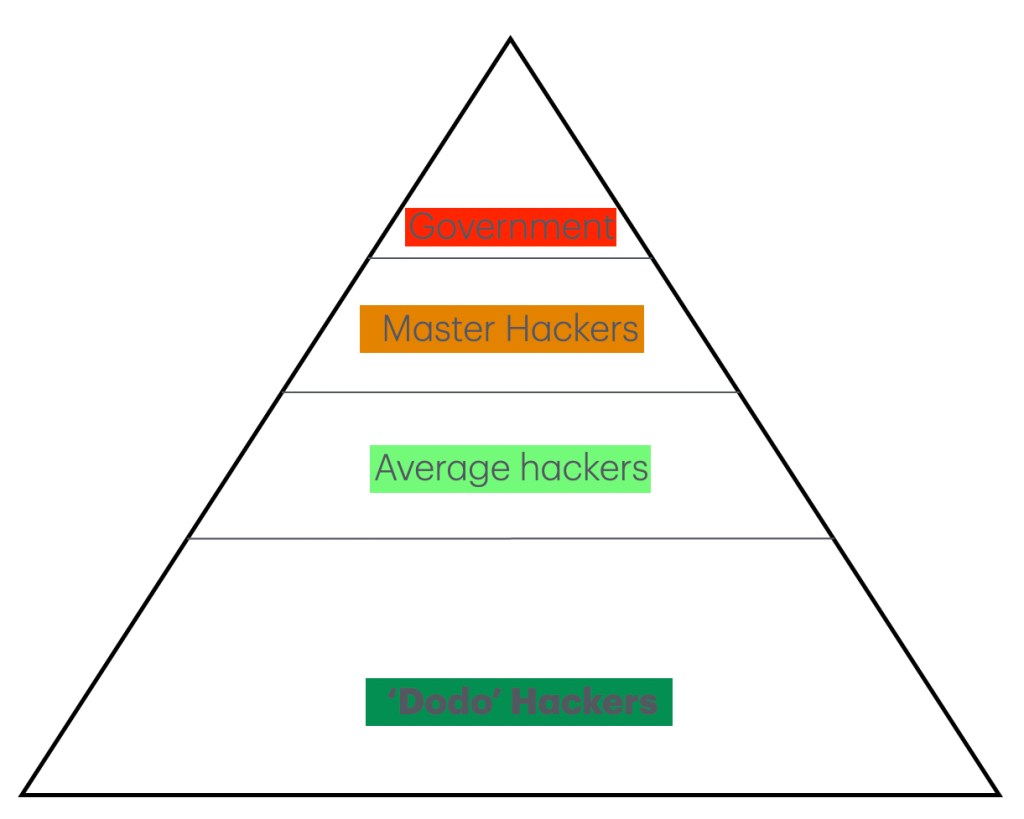

Yes, that is the setting, and it is not some song by Kenny Loggins (1986) or Tom Cruise playing rocket man with his F-14 Tomcat (it wasn’t his, it was property of the US defense forces). The danger zone is real and Europe just opened it up. As I see how EU countries are now rejecting Microsoft and Google on national scales, the setting changes. I get why you reject Microsoft, and to some level I grudgingly accept that Google will go that same way; the need for data sovereignty is almost crystal clear, especially under this US Administration. But the danger zone comes calling. You see, Google also owns Mandiant, which is called a premier, technology-agnostic cybersecurity firm specializing in advanced threat intelligence, incident response, and managed defense with decades of experience. It was bought by Google in 2022, and as such it will fall away from the nooks and crannies of office cyberspace. So I wonder if anyone considered rereading their contracts and the danger zone they opened themselves up to. I have no idea what Microsoft has (and I kinda don’t care), but they will have something in place, and when that all falls away, the EU and its settings are opening themselves up to a lot of cyber hassle. A massive redirection will be needed to avert those dangers. I also reckon that every Tom, Dick, Harry and Seamus with more than 2 weeks of cyber knowledge will offer themselves as ‘cyber experts’, and that is likely going to increase the tensions and threat settings for corporations all over the EU. I reckon that (allegedly) Russian and Chinese cyber threats will be running rampant over the next 20 weeks, so a cyber defense setting will become unavoidable. And if the EU doesn’t act fast, the costs will go into the millions per nation.

So even as we want to think that Google is the big evil (it really isn’t), the consequences of the CLOUD Act are one expensive hobby the United States never considered. As Europe (and soon the Commonwealth too) is deciding that digital sovereignty is the way to go, we can see a direct implosion of the AI bubble. As I see it, the United States has well over 4000 data centers, and that many are not required for the 349 million people it has. At that point, as these data centers fall away, I reckon that the United States will drop them like bad mortgages, most of them falling away because a population of one is not much of a population to cater to in any data centre. In addition, any corporation that wants to stay in business will have to create a European business, taking revenue away from the USA to a much larger extent. They wanted a ‘cloud’ act and in 2018 they got it: “The CLOUD Act (Clarifying Lawful Overseas Use of Data Act) is a 2018 U.S. federal law that dictates how technology companies respond to law enforcement requests for electronic data stored across international borders”. The bit about ‘electronic data stored across international borders’ will be costing them their heads soon enough, and there is no turning back that clock; confidence in the United States is gone. So, whilst we are given “U.S. authorities can legally compel U.S.-based tech companies (like Google, Microsoft, or Meta) to hand over user data and communications, regardless of whether that data is stored in the U.S. or abroad”, the danger is that this will also affect Amazon and optionally Oracle too. In the case of Oracle there is some doubt, as it is a software vendor and does not own any of the data, but its cloud business will take a massive hit. Of that I have no doubt. You see, as a US corporation, Oracle’s global cloud environments can be legally compelled to hand over data to US authorities via mechanisms like the CLOUD Act.
This puts European companies using standard global Oracle infrastructure at risk of violating local privacy laws, not to mention the dangers to their data sovereignty. As expressions go, this means that the United States really pickled their jars. What is clear is that I looked into a completely isolated Swedish data centre 1-2 years ago, and that firm is likely making massive revenue gains. Others called them nuts for doing what they did, and I reckon they are close to the only vendor in town that is not hindered by US protocols.

An interesting phase, but the danger for cyber security remains. And Microsoft? They are about to lose the bulk of 451 million customers, so their footing is about to get shaky, and for the cyber settings, whoever (non-American) comes with a decent package will make a killing in Europe. I wonder who will fill that gap?

What a nice setting to come to. So for any gamer who wants to have his own No Man’s Sky universe, with the data storage to keep a nation of gamers happy, it is likely that the USA will have some places for sale soon enough. Have a great day all.