This starts with a dream. I have done this before, but in this case the dream is not the real issue; it merely pointed me at the issue. In the dream I was in a weird firefight. I was shooting at something that looked like an Amsterdam canal boat, yet I was not in Amsterdam. The feeling I got was that this was in Germany, or perhaps Switzerland; I am not sure where it was. The boats were chasing me over what seemed to be train bridges and a weird-looking aqueduct, and for the most part I felt exhilarated. That lasted until I blew up the canal boat chasing me. At that point exhilaration changed into dread, a deep level of dread, and I felt lost. It was at this point that I woke up. The dream made no sense.

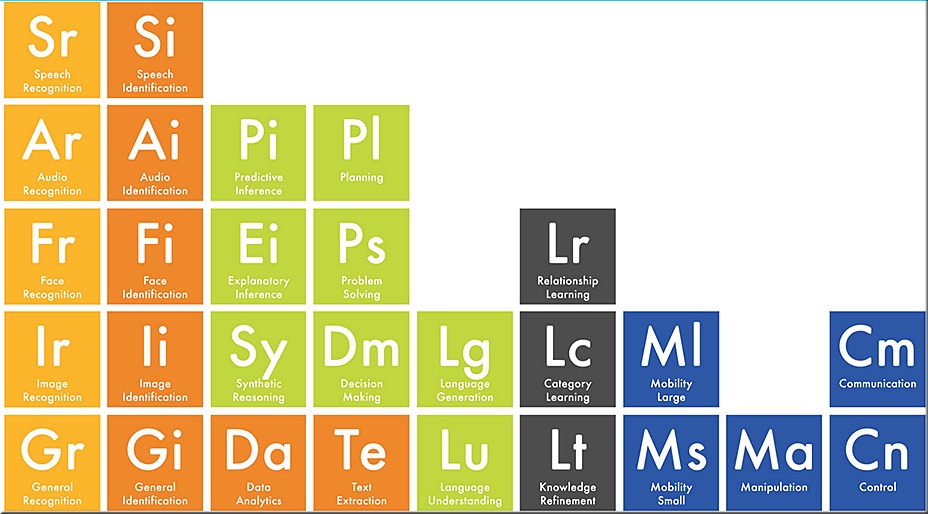

So as we close that part, we get to the next part; the first part will make sense later on. Consider what some claim is AI and what really is AI, the AI as Alan Turing saw it. Here I refer to the periodic table of AI, which shows how complex and evolved AI needs to be. In a simple form it is about Awareness, Perception, Recognition, Identification, Assessment and Proper response. This is merely the reception of syntax and the proper response to it: the right reaction to terms like ‘Yo Mama’, ‘Show me the money’, ‘X marks the spot’, and the list goes on. That is the stage where the AI goes haywire, as it never understood what was given to it. You see, today’s IT solutions that are laughingly called AI are purely responding to the data and programming THEY HAVE. This is nothing new, but as I was pondering this, suddenly the dream made sense. It was not about killing (perhaps a little); it was about the sudden feeling of dread and how to apply it to gaming.

If gaming needs to evolve, we need to consider another stage, an evolved stage where the player gets a lot more information. You see, the gamer (person) is like an advanced computer, so we need to create the sting of dread. We have numbed ourselves to the screen (display), but what if there was a second screen? What if we add something like Google Glass to the equation? I set that in motion in the stage that could one day be Far Cry 7, but the application is a lot wider. What happens when the glasses are not a camera, but a HUD that reacts to the screen? What we see on the screen becomes the input for the glasses acting as the HUD, and basically we are already in the clear for that. One might state that streamed games are better equipped for this than consoles and PCs are.

What if immersion is not merely the story? What if it becomes a larger stage of next generation games? When we find another way to add Image Identification and Data Analytics, add Knowledge Refinement, and drive all of that through something like Google Glass, we add a dimension to the game. It gives a larger stage towards immersion: the brain becomes much more invested in the game and the game feels more real to the player (however, if it does not work, it goes bad big time). When we game, we always know that we are gaming, because the brain can differentiate between the screen and what is around it. When we deprive it of that, the game becomes (optionally) a lot more immersive and therefore a lot more real to us.
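To make that chain a little more tangible, here is a rough Python sketch of how a screen frame could pass through the three steps before it reaches the glasses. Everything in it is my own assumption for illustration: the `Detection` fields, the function names `identify`, `analyse`, `refine` and `hud_lines`, and the idea that a frame arrives as a ready-made list of objects. A real setup would need an actual vision model and an actual HUD device, neither of which is shown here.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str       # what was identified on the screen
    distance: float  # hypothetical in-game distance in metres
    hostile: bool    # whether the game treats it as a threat


def identify(frame):
    """Image identification (stub): a real system would run a vision model here."""
    return [Detection(**obj) for obj in frame["objects"]]


def analyse(detections):
    """Data analytics: keep only hostile detections, nearest first."""
    return sorted((d for d in detections if d.hostile), key=lambda d: d.distance)


def refine(threats, max_items=2):
    """Knowledge refinement: boil the ranking down to a few short HUD lines."""
    return [f"{t.label} at {t.distance:.0f} m" for t in threats[:max_items]]


def hud_lines(frame):
    """Full chain: screen frame in, text for the glasses out."""
    return refine(analyse(identify(frame)))


# A made-up frame; the objects are borrowed from the dream, not from any game.
frame = {"objects": [
    {"label": "canal boat", "distance": 40.0, "hostile": True},
    {"label": "train bridge", "distance": 15.0, "hostile": False},
    {"label": "gunner", "distance": 12.0, "hostile": True},
]}

print(hud_lines(frame))  # ['gunner at 12 m', 'canal boat at 40 m']
```

The point of the sketch is only the order of the steps: the glasses never see the raw frame, they only get the refined result, and that result is what should hit the player before the screen does.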

This is where cloud gaming might become the next step in gaming. If we can offer more immersion, the brain will see the game as the only place we are, and that takes some doing. Yet in all this, there might be a side effect. Not that it is necessarily a bad one, but when we cannot tell the difference, our sense of balance becomes unhinged and I actually do not know what happens at that point. I reckon a psychiatrist might be the person the game developer needs to talk to.