It happens: we are all late to a party at times, and I am no exception. I was busy looking at the stupidity of law and the stupidity of plaintiffs, and waiting on the courts, all whilst the approach to common sense was kept at bay. As such I did not read the BBC article ‘MP Maria Miller wants AI ‘nudifying’ tool banned’ until today. The article (at https://www.bbc.com/news/technology-57996910) shows the stage of what is called ‘a nudifying tool’. We can argue that the tool does not exist, because AI does not exist. You see, what some call AI is nothing but deeper learning. Someone took a deeper learning approach, accepted and acknowledged the setting that ‘sex sells’, and used that to create a stage.

It is a lot worse than it sounds. When we consider ‘Nudifier APK for Android Free Download’ and the hundreds of millions of horny guys (of all ages), we see a market, a market for organised crime to exploit as an injector of backdoors (no pun intended), and in this Maria Miller loses from stage one. You see, the app gives us “allows you to pixelate parts of your photo so that the illusion that your body is naked is also there”; they know how it will be used, but this statement washes their hands. Nothing is ever free, and I reckon this free download comes at a price. And with “By the way, packed with decorative elements for the picture, for example, the magazine cover is also paid separately. And that’s $ 0.99, which is the same price as the app.” we see the setting where the maker is looking to become a multimillionaire by September 30th.

So when we get to see some Getty image of a despairing woman with the text “Currently nudification tools only work for creating naked women”, we wonder what on earth is going on, even as we also get ‘one developer acknowledged was sexist’. Just one? How many developers were asked? It becomes a larger stage with “similar services remain on the market, many using the DeepNude source code, which was made publicly available by the original developers. While many often produce clumsy, sometimes laughable results, the new website uses a proprietary algorithm which one analyst described as “putting it years ahead of the competition”.” Is no one standing still at the one small part, ‘a proprietary algorithm’? Proprietary algorithms are never handed over. This has a larger stage coming, and even as we see the actions of Maria Miller, I wonder how far it will go. The moment someone attaches the word ‘art’ whilst not taking responsibility for the images used (the user does that), where will it end?

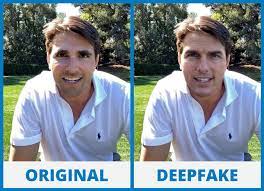

And I am right: the end of the article gives us “The goal is to find what kind of uses we can give to this technology within the legal and ethical [framework].” But in the meantime hundreds or even thousands of senior high school girls will see nudified versions of their images, all whilst the stage was known for the better part of 2 years. I reckon that within 5 years the glossy magazines will all have nudified versions of every celebrity; that’s how the money flows and that is how the station will go. Is it right? Of course it is not, but the larger stage of ‘sex sells’ has been out there for decades and the law never did anything to stop it. Some MPs listened to silly old clerics and merely attacked porn; now we see that the larger station is evolving and the involved parties are all wondering what to do next.

And in this no one takes notice of ‘one developer acknowledged was sexist’. Just the one? The UK has approximately 408,000 software development professionals. The US has almost 4 million developers. And we see just one developer who acknowledged it was sexist? I have not even included the EU and Asian developers. So in all this, I reckon we have a much larger problem; optionally the writer, Jane Wakefield, needs to take another look at the article.

So whilst millions of 14-23 year old boys are looking to find DeepSukebe’s website, hoping to reveal a slightly more interesting view of Olivia Wilde, Laura Vandervoort, Leslie Bibb, Emma Watson, Paris Hilton and the cast of Baywatch, we need to consider that this was always going to happen. There are shady sides to deeper learning, and whilst the enterprising and greed-driven people are pushing for others to take a look, so are the members of organised crime, and so are the enterprising people who considered an IT solution to push millions of paparazzi out of work. Do you really think that some glossy magazine will ignore images when people cannot tell whether they are real or fake?
Consider the image below. As the technology becomes so good that we can no longer tell the difference in a face we have seen in dozens of movies, do you think we would be able to tell whether the boobies and shrubberies we never saw were real or deepfake? And when the images achieve 2400 dpi, do you think the glossy magazines and gossip providers will ignore them when circulation and clicks grow?