Data has dangers, and I think that, more by accident than by intent, CBC exposed one (at https://www.cbc.ca/news/canada/british-columbia/whistle-buoy-brewing-ai-beer-robo-1.6755943) where we were given ‘This Vancouver Island brewery hopped onto ChatGPT for marketing material. Then it asked for a beer recipe’. You see, there is a massive issue, and it has been around from the beginning of this event: AI does not exist, it really does not. What marketing did to make easy money was take a term and transform it into something bankable. They were willing to betray Alan Turing at the drop of a hat, and why not? The man was dead anyway and cash is king.

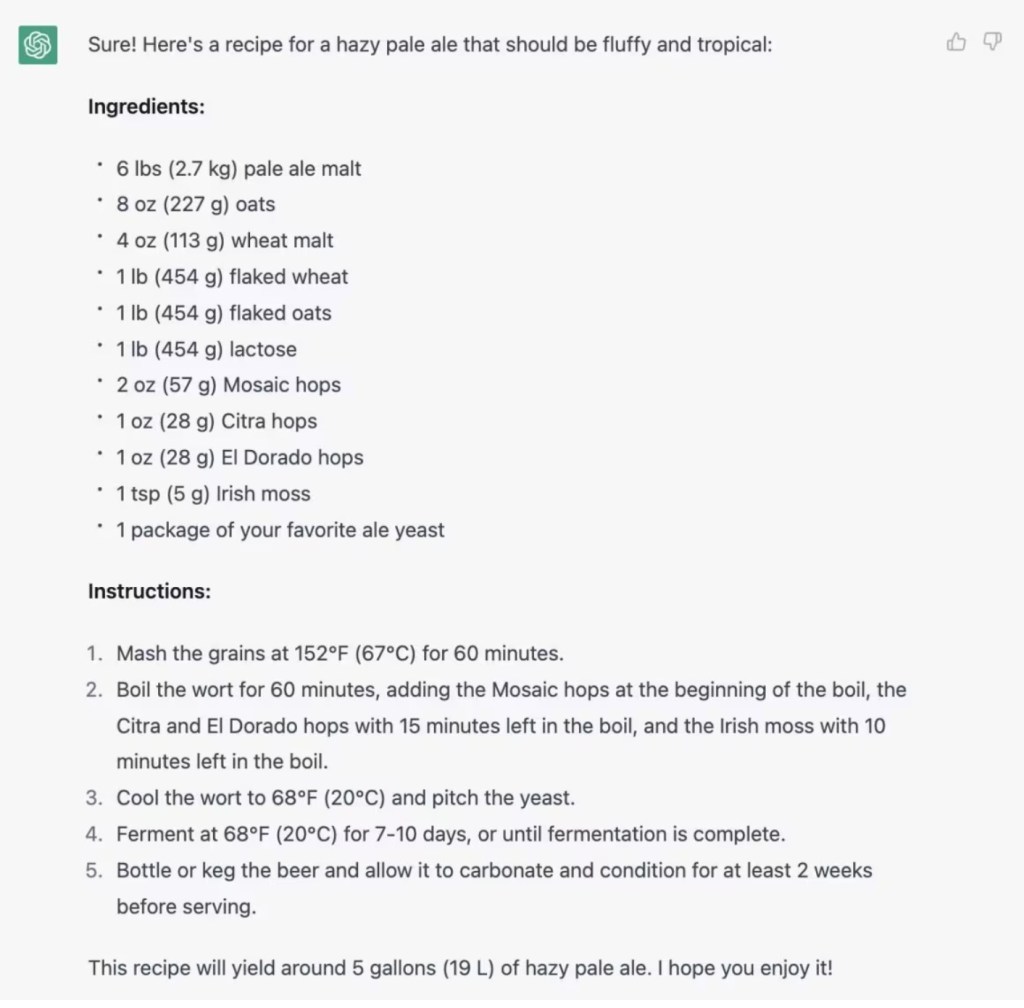

So they took advanced machine learning and data repositories, added a few items, and called it AI. Now we have a new show. And CBC gives us “let’s see what happens if we ask it to give us a beer recipe,” he told CBC’s Rohit Joseph. They asked for a “fluffy, tropical hazy pale ale” and we see the recipe below.

Now I have a simple question with two possible answers. Is this a registered recipe, making this IP theft, or is this a random guess from established parameters, optionally making it worse? Random assignment of elements is dangerous on a few levels, and it is not up to the program to do this, but it did so here, and that is a dangerous step to make. But I am more taken with option one: the program had THAT data somewhere. So in a setting where classified data was acquired through clandestine means and the program allowed for this, that is a direct danger. So what happens when that program gets to assess classified data? The skip between machine learning, deeper machine learning, data assessment and AI is a skip that is a lot wider than the Grand Canyon.

But there is another side. We see this with “CBC tech columnist and digital media expert Mohit Rajhans says while some people are hesitant about programs like ChatGPT, AI is already here, and it’s all around us. Health-care, finance, transportation and energy are just a few of the sectors using the technology in its programs”. People are reacting to AI as if it exists, and it does not. More important, when ACTUAL AI is introduced, how will people manage it then? And the added legal implications aren’t even considered at present. So what happens when I improve the stage of a patent and make it an innovative patent? The beer example implies that this is possible, and when patents are hijacked by innovative patents, what kind of a mess will we face then? It does not matter whether it is Microsoft backing OpenAI’s ChatGPT or Google with their Bard, or was that The Bard’s Tale? There is a larger stage that is about to hit the shelves and we, the law and others are not ready for what some of the big tech companies are about to unleash on us. And no one is asking the real questions, because there is no real documented stage of what constitutes a real AI and what rules are imposed on it. I reckon Alan Turing would be ashamed of what scientists are letting happen at this point. But that is merely my view on the matter.