This happens. Sometimes change is pushed from within oneself; in other cases it comes from outside information or interference. In my case it is outside information. Now, let’s be clear: this is based on personal feelings, and apart from the article not a lot of it is set down in papers. But it is also based in part on my experience with data, and there is a hidden flaw. There is a lot of media that I do not trust and I have always been clear about that. So you might have issues with this article.

It all started when I saw yesterday’s article called ‘‘Risks posed by AI are real’: EU moves to beat the algorithms that ruin lives’ (at https://www.theguardian.com/technology/2022/aug/07/ai-eu-moves-to-beat-the-algorithms-that-ruin-lives). There we see: “David Heinemeier Hansson, a high-profile tech entrepreneur, lashed out at Apple’s newly launched credit card, calling it “sexist” for offering his wife a credit limit 20 times lower than his own.” My first question here is ‘Based on what data?’ You see, Apple is (in part) greed driven; as such, if she has a credit history and a good credit score, she would get the same credit. But the article gives us nothing of that. It moves quickly to “artificial intelligence – now widely used to make lending decisions – was to blame. “It does not matter what the intent of individual Apple reps are, it matters what THE ALGORITHM they’ve placed their complete faith in does. And what it does is discriminate. This is fucked up.””

You see, the very first issue is that AI does not (yet) exist. We might see all the people scream AI, but there is no such thing as AI, not yet. There is machine learning, there is deeper machine learning, and they are AWESOME! But the algorithm is not AI; it is a human equation, made by people, supported by predictive analytics (another program in place), and that too is made by people. Let’s be clear: this predictive analytics can be as good as it gets, but it relies on the data it has access to. To give a simple example: run that same setup in a place like Saudi Arabia and Scandinavians would be discriminated against as well, no matter what gender. The reason? The Saudi system will not have the data on Scandinavians that it has on Saudis requesting the same options. It all requires data, and that too is under scrutiny: especially in the era 1998-2015, too much data was missing on gender, race, religion and a few other matters.
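To make the missing-data point concrete, here is a minimal sketch of my own. It is not how Apple, Goldman Sachs or any real lender scores people; every name and number in it (`History`, `estimated_repay_rate`, `credit_limit`, the prior of 0.5, the 20% of income) is an assumption made up for illustration. It shows two groups with exactly the same observed repayment behaviour, where the only difference is how many records the system holds on them.

```python
from dataclasses import dataclass


@dataclass
class History:
    """Hypothetical historical lending records held for one group."""
    repaid: int     # loans repaid in full
    defaulted: int  # loans that defaulted


def estimated_repay_rate(h: History, prior_rate: float = 0.5,
                         prior_weight: int = 20) -> float:
    """Observed repayment rate, pulled towards a cautious prior.

    With few records the prior dominates; with many records the
    observed data dominates. The prior values are assumptions.
    """
    total = h.repaid + h.defaulted
    return (h.repaid + prior_rate * prior_weight) / (total + prior_weight)


def credit_limit(income: float, h: History) -> float:
    """Toy rule: 20% of income, scaled by the estimated repayment rate."""
    return round(income * 0.2 * estimated_repay_rate(h), 2)


# Both groups repaid 95% of their loans; only the amount of data differs.
history = {
    "well_covered": History(repaid=9500, defaulted=500),
    "barely_covered": History(repaid=19, defaulted=1),
}

if __name__ == "__main__":
    for group, records in history.items():
        print(group, credit_limit(60_000, records))
```

Same income, same track record, and the barely covered group still ends up with the lower limit. Nobody programmed ‘discriminate’ into it; a person chose a cautious default for thin data, and the data did the rest.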
You might state that this is unfair, but remember, it comes from programs made by people addressing the needs of bosses in Fintech. So a lot will not add up, and whilst everyone screams AI, these bosses laugh, because there is no AI. And the sentence “While Apple and its underwriters Goldman Sachs were ultimately cleared by US regulators of violating fair lending rules last year, it rekindled a wider debate around AI use across public and private industries” does not help. What legal setting was in play? What was submitted to the court? What decided on “violating fair lending rules last year”? No one has any clear answers and they are not addressed in this article either.

So when we get to “Part of the problem is that most AI models can only learn from historical data they have been fed, meaning they will learn which kind of customer has previously been lent to and which customers have been marked as unreliable. “There is a danger that they will be biased in terms of what a ‘good’ borrower looks like,” Kocianski said. “Notably, gender and ethnicity are often found to play a part in the AI’s decision-making processes based on the data it has been taught on: factors that are in no way relevant to a person’s ability to repay a loan.”” we have two defining problems. In the first, there is no AI. In the second, “AI models can only learn from historical data they have been fed”, I believe that there is a much bigger problem. There is a stage of predictive analytics, and there is a setting of (deeper) machine learning, and they both need data. That part is correct: no data, no predictions. But how did I get there?

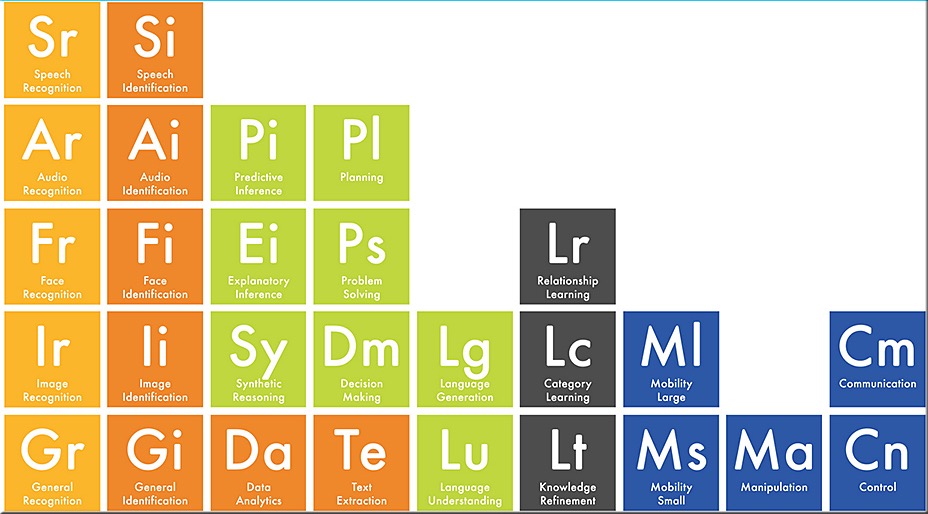

That is seen in the image above. I did not make it, I found it, and it shows a lot more clearly what is in play. In most Fintech cases it is all about the Sage (funny moment): predictive inference, explanatory inference, and decision making. A lot of it is covered in machine learning, but it goes deeper. The black elements as well as control and manipulation (blue) are connected. You see, an actual AI can combine predictive analytics and extrapolation, and do that for each category (race, gender, religion), all the elements that make the setting. But data is still a part of that trajectory, and an actual AI will not arrive until shallow circuits are more perfect than they are now (due to the Ypsilon particle, I believe). You see, a Dutch physicist found the Ypsilon particle (if I word this correctly), and it changes our binary system into something more. These particles can be nought, zero, one or both, and that setting is not ready. It allows the interactions a much better process that will lead to an actual AI. When the IBM quantum systems get these two parts in order they will become true quantum behemoths, and they are on track, but it is a decade away.

It does not hurt to set a larger AI setting sooner rather than too late, but at present it is founded on a lot of faulty assumptions. And it might be me, but look around at all these people throwing AI around. What is actual AI? And perhaps it is also me: the image I showed you is possibly inaccurate and lacks certain parts, I accept that, but it drives me insane when we see more and more AI talk whilst it does not exist.

I saw one decent example: “For example, to master a relatively simple computer game, which could take an average person 15 minutes to learn, AI systems need up to 924 hours. As for adaptability, if just one rule is altered, the AI system has to learn the entire game from scratch”. This time is not learning, it is basically staging EVERY MOVE in that game. Take chess: we learn the rules, whereas the so-called AI will learn all of the between 10^111 and 10^123 positions (including illegal moves) in chess. A computer can remember them all, but if one move was incorrectly programmed (like the knight), the program needs to relearn all the moves from the start. When the Ypsilon particle and shallow circuits are added, the equation changes a lot. But that time is not now, not for at least a decade (speculated time).

So in all this the AI gets blamed for predictive analytics and machine learning, and that is where the problem starts. The equation was never correct or fair, and the human element in all this is ‘ignored’ because we see the label AI. But the programmer is part of the problem, and that is a larger setting than we realise.

Merely my view on the setting.