Yup, that is the statement I am going for today. You see, at times we cannot tell one from the other, and the news is making it happen. OK, that seems rough, but it is not, and in this particular case it is not an attack on the news or the media. As I see it, they are suckered into a false sense of security, mainly because the tech hype creators are part of the problem.

As I personally see it, this came to light when I saw the BBC article ‘Facebook’s Instagram ‘failed self-harm responsibilities’’ (at https://www.bbc.com/news/technology-55004693). The article was released 9 hours ago, and my blinkers went red when I noticed “This warning preceded distressing images that Facebook’s AI tools did not catch”. You see, there is no AI. It is a hype, a ruse, a figment of greedy industrialists, and to give you more than merely my point of view, let me introduce you to ‘AI Doesn’t Actually Exist Yet’ (at https://blogs.scientificamerican.com/observations/ai-doesnt-actually-exist-yet/). Here we see some parts written by Max Simkoff and Andy Mahdavi.

Here we see “They highlight a problem facing any discussion about AI: Few people agree on what it is. Working in this space, we believe all such discussions are premature. In fact, artificial intelligence for business doesn’t really exist yet”. They also go with a paraphrased version of Mark Twain: “reports of AI’s birth have been greatly exaggerated”. I gave my version in a few blogs before: the need for shallow circuits, the need for a powerful quantum computer. IBM has a few in development and they are far along, but they are not there yet, and that is merely the top of the cream, the icing on the cake. Yet these two give the goods in a more eloquent way than I ever did: “Organisations are using processes that have existed for decades but have been carried out by people in longhand (such as entering information into books) or in spreadsheets. Now these same processes are being translated into code for machines to do.
The machines are like player pianos, mindlessly executing actions they don’t understand”, and that is the crux: understanding and comprehension. It is required in an AI, and that level of computing does not exist now, nor will it for at least a decade. Then they give us “Some businesses today are using machine learning, though just a few. It involves a set of computational techniques that have come of age since the 2000s. With these tools, machines figure out how to improve their own results over time”. It is part of AI, but merely a part, and it seems that the wielders of the AI term are unwilling to learn, possibly because they can charge more, a setting we have never seen before, right?

After that we get “AI determines an optimal solution to a problem by using intelligence similar to that of a human being. In addition to looking for trends in data, it also takes in and combines information from other sources to come up with a logical answer”, which as I see it is not wrong, but not entirely correct either (from my personal point of view). I see it as “an AI has the ability to correctly analyse, combine and weigh information, coming up with a logical or pragmatic solution towards the question asked”. This is important: the question asked is the larger problem. The human mind has this auto-assumption mode; a computer does not. There is the old joke that an AI cannot weigh data as he does not own a scale. You think it is funny and it is, but it is the foundation of the issue. The fun part is that we saw this applied by Stanley Kubrick in his version of Arthur C Clarke’s 2001: A Space Odyssey.
It is in the conflicting instructions that HAL-9000 had received: the crew was unaware of a larger stage of the process, and HAL had to “resolve a conflict between his general mission to relay information accurately and orders specific to the mission requiring that he withhold from Bowman and Poole the true purpose of the mission”, which has the unfortunate result that astronaut Poole goes the way of the dodo. It matters because there are levels of data that we have yet to categorise, and in this the AI becomes as useful as a shovel at sea. This coincides with my hero the Cheshire Cat: ‘When is a billy club like a mallet?’ The AI cannot fathom it because he does not know the Cheshire Cat or the thoughts of Lewis Carroll, and the less said to the AI about Alice Kingsleigh the better. Yet that also gives us the part we need to see: dimensionality, weighing data from different sources and knowing the multiple uses of a specific tool.

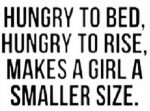

You see, a tradie knows that a monkey wrench is optionally also useful as a hammer. What passes for AI today will not comprehend this, because the data is unlikely to be there: the AI programmer is lacking knowledge and skills, and the optional metrics and size of the monkey wrench are missing. These are all elements that a true AI can adapt to: it can weigh data, it can surmise additional data and it can aggregate and dimensionalise data. Automation cannot.

When you see this little side quest, you start to consider “I don’t think the social media companies set up their platforms to be purveyors of dangerous, harmful content but we know that they are and so there’s a responsibility at that level for the tech companies to do what they can to make sure their platforms are as safe as is possible”. As I see it, this is only part of the problem. The larger issue is that there are no actions against the posters of the materials; that is where politics falls short. This is not about freedom of speech and freedom of expression. This is a stage where (optionally with intent) people are placed in danger and the law is falling short (and has been falling short for well over a decade). Until that is resolved, people like Molly Russell will just have to die. If that offends you? Good! Perhaps that makes you ready to start holding the right transgressors to account. Places like Facebook might not be innocent, yet they are not the real guilty parties here, are they? Tech companies can only do so much, and that failing has been seen by plenty for a long time, so why is Molly Russell dead? Finding the posters of this material and making sure that they are publicly put to shame is the larger need. Their mommy and daddy can cry ‘foul play’ all they like, but the other parents are still left with the grief of losing Molly. I think it is time we do something actual about it and stop wasting time blaming automation for something it is not.
It is not an AI. Automation is a useful tool, no one denies this, but it is not some life-altering reality. It really is not.