I received a few messages about my previous article, ‘Perception is merely the start’. Several readers had a hard time comprehending it, and of course that is my fault. Well, OK, I will accept that. Yet I also assumed that a few people were ahead of me in some regards, so having to explain this felt a little weird, but fair enough. It seems that people in several industries were in the dark on a few items there, so here goes.

Perception

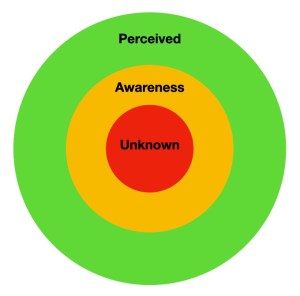

The perception circles show a progression from what we perceive to what is unknown; in the middle is what we are aware of. Some put these in a different order, yet perception is the largest circle. Within what we perceive (complete awareness) there is what we are merely aware of (partial awareness), and the inner circle is what we do not know. People expect it to be the other way round, but this runs from niche to speciality. For example, we perceive a firearm. We are partially aware of its calibre, its ammunition, its spare parts and its cleaning kits, yet the specific parts of the firearm are unknown to us. The same applies to games. We are aware of a type of game and partially aware of objects, scripting and optionally programming, yet we are in the dark about programming itself. This repeats itself when we look at the larger picture of cloud gaming and optional gaming tools (like Google Glasses). We see the elements, but we do not see precisely how they interact.

Assessment

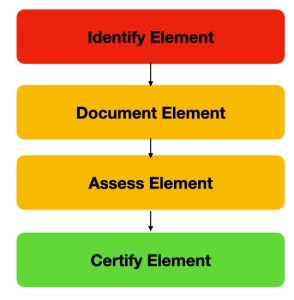

Then at some point I mention “In a simple form it is about Awareness, Perception, Recognition, Identification, Assessment and Proper response.” In the second graph we see how identification and assessment work, and here it does not go from the outside inwards; it goes from the unknown to the perceived. This might seem weird, but the brain works in the other direction: we auto-label what we know until we are left with the unknown, whereas the assessment goes the other way, as the brain simply discards every step that is already known. That is the first issue we see in AI. I limited it to the linguistic side, but AI has a larger problem with identification, because it never learned to learn. Our brains got that from creation (and childhood); we learned to learn, and that is our benefit. AI (what salespeople call AI) relies on deeper learning, and when it crosses into the unknown it is lost (until the programmer adds options as wide as possible). That is the larger setting where games fail. So we need a larger data pool, and when we add additional signals we get a level of immersion. It is a data overload and the brain now takes over: it uses what it comprehends and relates to, we enter the game on a deeper level, and the game seemingly overtakes our sense of reality, because we are vested in THAT game. As the brain has less time for what is around it, we seemingly forget our surroundings until we are yanked out of the game. An example is to see ourselves as a horse in traffic. We are aware of traffic because we have a wide perception, but now (as a horse) we are given blinkers. Their function is to limit vision, “a piece of horse tack that prevent the horse seeing to the rear and, in some cases, to the side”. We can get that same effect by other means (like the Google Glasses): as the brain gets more information, it drops what is not relevant, and so the real world falls away. Now, it is important to realise that my model is imprecise (or incomplete).
In the assessment stage there are levels of verification that we perform automatically. Consider that you are walking and see a sign showing a time (3:30), yet as you get closer you suddenly realise that it says 3:38; the brain verified what it saw again and again until there was clarity. We forget about these automated processes, and that is where AI also fails. When it has the data, the data is assumed to be correct and on point for what we require, yet when we return to the ‘Yo mama’ expression, the AI cannot tell whether it is about your mother, a formal declaration of defeat, or a joke. It never comprehended what was real; the programmer never taught the AI, and there are waves of missing data pointers. The part we are usually given is linked to deeper learning, and there we see a lot of good (really a lot). In this, Saga Briggs wrote “You can’t search for something you’ve already found, can you? In the case of deeper learning, it appears we’ve been doing just that: aiming in the dark at a concept that’s right under our noses” and that is the problem: an actual AI would have the wisdom to read a situation as it approaches. Our brain does that, it has that ability; the computer does not. As such it leaves a lot blank (optionally a lot to be desired), yet our brains pick up on a lot of that, hence my anger at Ubisoft and their embrace of mediocrity. Yet as I see it, if we give the brain MORE to deal with, like a HUD in Google Glasses or something similar, the game changes. The blanks (as our brains see them) fall away, we get a lot more, and the brain is now fully engaged. The immediate effect is that the game seems a lot more immersive. So what we perceive increases by a factor N, and the game becomes (seemingly) a lot more rewarding to the player.

Validation

This now gets us to a model you will have seen in all kinds of versions before: validation and verification. Yet in this setting we see Verification (A), where we control what we see and either confirm it or let the brain think it is doing so (through a second display like the Google Glasses). This adds a larger dimension to immersion, yet it will not do the job alone. The other side gives us Validation (B): is it a bird? (Superman), is it an enemy? (AC Origins), and the list goes on. That side covers where we are, where we are going, and whether we are on the right track. In the middle is the neat stuff: the system, the deeper learning, or perhaps more accurately the data we are given, and that has an upside and a downside. The upside is that if there is data, it will always be correct. The downside is that our brains have always relied on checks and balances, and if the test and the data are always 100% correct, the brain becomes less and less convinced and the model fails in a game. Checks and balances are missing too often, and that is where it goes wrong; so if we give the brain more to do, it takes longer for it to catch on and the immersion becomes more and more complete. These three models are always active and always relate to one another in some form, so when the brain is handed the exact item from some table, it shuts down in disbelief. Nature is never perfect, and that is where the game goes wrong: the brain is no longer convinced.

That is the setting where cloud gaming could become the next thing. We had the provide stage, where we knew nothing (Atari 2600); we moved towards seek, where we learned what was out there (Atari ST); we entered connect, where we connected to what we were playing (PlayStation 2 and 3); and now we enter the imprint stage, where the game imprints its brand on our needs and desires (PlayStation 4 and 5, cloud). This is where the cloud (optionally) becomes more.

All this was part of yesterday, and the developers and IP people should have been on this page long before I put it out here today. So that is where we are now, and that is where gaming can go in 2022-2023. Will it? It depends on the level of immersion they are banking on. I reckon consoles will take longer because of their software model, but cloud gaming (like Amazon and possibly Netflix) can go further. It will be about a lot more than merely the graphics and the story, and I wonder if they are ready for that.