There is a setting, one that requires scrutiny and demands a closer look. You see, I do not completely agree with the setting that The Guardian gives us (at https://www.theguardian.com/technology/2026/feb/26/how-to-replace-amazon-google-x-meta-apple-alternatives) with the illustrious title ‘Leave big tech behind! How to replace Amazon, Google, X, Meta, Apple – and more’. The first big thing is that there is no mention of Microsoft in that title, so that is the very first thing that comes to mind, especially as Copilot was reported earlier this week to have been sifting through our confidential emails. I can drop the ‘alleged’ as Microsoft admitted to this and basically said ‘Oops’ as an implied reason. So what gives?

It starts with “So many ills can be laid at its door: social media harms, misinformation, polarisation, mining and misuse of personal data, environmental negligence, tax avoidance, the list goes on. Added to which, Silicon Valley’s leaders seem all too keen to cosy up to the Trump administration, to shower the president with bribes – sorry, gifts – and remain silent about his worsening political overreach. And that’s before we get to the rampant “enshittification”, as the tech writer Cory Doctorow describes it, which means that by design many big tech products have become less useful and more extractive than they were when we originally signed up to them.” OK, I can go along with this. And the sentence “many big tech products have become less useful and more extractive than they were when we originally signed up to them” gets a mention from me because some of these ‘culprits’ seemingly have no idea what innovation is; for that you have to look towards China, specifically Huawei and Tencent. So we get to the first hurdle.

“Google has cornered 90% of the search market for the past decade, but it is often no better, and sometimes demonstrably worse than its rivals, perhaps on purpose – Doctorow has called Google: “the poster-child for enshittification” citing its alleged strategy of worsening search quality so that users spend more time on the site. But changing the default search engine on any device is extremely easy. I’ve been using Ecosia for years. Instead of using your searches to fill corporate coffers, it uses them to plant trees. The Berlin-based company claims to have planted nearly 250m trees since it launched in 2009 (you can even get your own personal counter to feel extra virtuous). Ecosia commits 100% of its profits to climate action (over €100m so far), produces more clean energy than it consumes via its own solar plants, and collects minimal data on its users. Ecosia’s search results are not always as thorough as Google, admittedly (in the “news” category, for example), though the toolbar does give you options to search via Google and Bing if you need to.” The issue is that Ecosia is, for all intents and purposes, Microsoft Bing. So this is seemingly a sales talk by a journalist, because there is a massive problem finding anything by Microsoft reliable. And then we get the real stuff. Microsoft knows it is in hot water, so we are given “The French company Qwant is similarly privacy-oriented (its slogan is “The search engine that values you as a user, not as a product”) and is now mostly independent (having started out based on Bing). It is now partnering with Ecosia to build a new “European search index”.” Yes, but Microsoft is American and as such your data will be copied, frowned on and browsed through to their heart’s content. If this is wrong, Ecosia and Qwant had better clearly state that they are independent of Microsoft, because that is still the issue in Europe, and for all they state that their data is completely secure, the issue becomes: where are the backups?
If they are on an American cloud or server, the setting of privacy is set to 0%.

I can agree with the Browser chapter, and even as I still rely on Google (it has never failed me), I get that not everyone is in that chapter of things. I get the Office part. I myself downloaded LibreOffice (download only, no installation yet) and I will look at it at some point; the Apple apps do their work brilliantly. So we are given “Many of them, including Austria’s military and local governments in Germany and France, are switching to LibreOffice, created by the Berlin-based, nonprofit, The Document Foundation. Businesses and individuals are doing the same. Ethical Consumer has used LibreOffice for some time, says Fraser. “It’s an open-source version of Word, and all of the Office tools. It works and looks basically the same.”” I personally reckon that this is the problem Microsoft has, and getting the data from Ecosia might be their last handhold on European data. This is not a given, but I expect that this is their way into Europe to some degree. And whilst everyone is concerned with the privacy of data, I reckon that, similar to the setting of 1998-2002, no one is digging into and questioning the stages of backups. But that might merely be me, and as I am no longer living in Europe, I casually don’t care.

Then we see the mobile settings with a shoutout to Fairphone in the Netherlands. I have nothing against Fairphone, but it always makes me wonder if Fairphone had the same idea that Tulip had in the 90’s. That doesn’t make it wrong, it is merely a business ploy that should be considered. I am now and always have been a Google guy. So when we see “There is a catch: most of these phones still rely on Google’s Android operating system, but any phone can be fully “de-Googled” with the /e/OS operating system (it comes as standard with Murena phones), developed by the global, mostly European, nonprofit, e Foundation.” I can think of a way where Google can set this with their Pixels. When the consumer can select Google or a Linux version that does most of the stuff, Google clearly wins in several chapters. I reckon that these smaller players can merely snap up market share because of this; when Google leaves it to the consumers, Google wins nearly automatically. Oh, and in all this there is no mention of HarmonyOS, and I reckon that these smaller players are adjusting to HarmonyOS as we speak, or cater to, or appease that branch. Not everyone in Europe is ‘China hating’ material. And that is merely the smallest setting of these parts.

I am personally not touching the shopping side. I was raised as a follower of ‘Support your local hooker’, a phrase from the late 70’s. In that age we got malls, supermarkets and such, and due to that escalation loads of local stores went through a foreclosure setting. In that same way I don’t order from Amazon. I have nothing against Amazon; they closed the gap for rural places that had no way to get stuff delivered, and over 60% of Europe and 71% of rural USA is now served. As such Amazon did right by them. I just believe that I should get to the local stores to get what I need. I only had to resort to Amazon twice in the last 10 years. So I am happy.
And all these Amazon haters can go sit in a corner trying to work out the function of a cheese slicer (revelation: the red corners that are diminishing have figured it out).

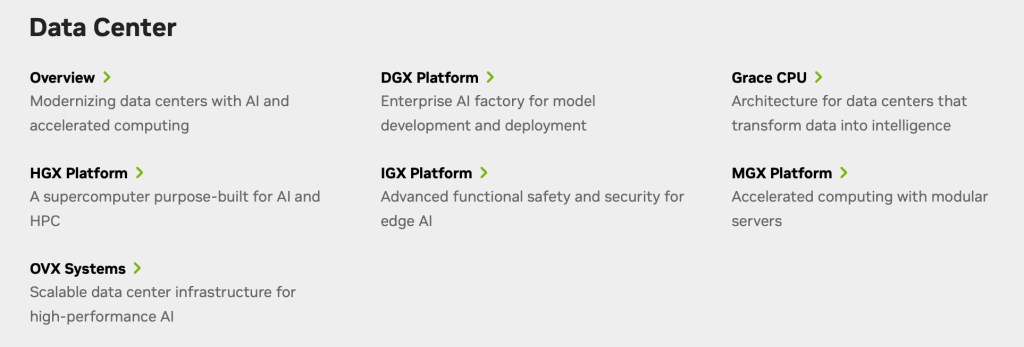

But my issue is that Microsoft is shown in a ‘favorable’ light. They aren’t, and they aren’t due that light as I personally see it. The fear behind this is not Big Tech, it is the policy that comes through the CLOUD Act (2018). It gives America too much ability to get to our data and in several cases non-American IP, which is even more frightful. These hundreds of data centers have no reason to exist if the CLOUD Act (2018) was made illegal, that is how I see it, and there is no saving Microsoft, because we get ‘blunder’ after ‘blunder’. How long until we get another ‘Oops’ setting, but now with corporate IP set in some AI hole? That is the larger fear that I see and there is no stopping it. Whilst corporations are breathing the AI cloud through wannabes who want to move up in the world, that data is most likely to get compromised, and as corporations are not subjecting the HR and data loops to any scrutiny, this is likely already happening and will continue to happen until the then valueless corporations see that they had to act a lot sooner than the day before all their data is in other hands. We already have Thomson Reuters v. ROSS Intelligence (2025), Bartz v. Anthropic (2025/2026), Disney & NBCUniversal v. Midjourney, and the best case is United States v. Heppner (2026), where we see that documents drafted using a public, consumer-grade AI tool were not protected by attorney-client privilege or the work product doctrine. And that is the setting that people miss. Should someone at IBM use that setting, their work becomes public. So consider that this is not about IBM, but about Microsoft: using Copilot or OpenAI (ChatGPT), the work of your corporation becomes for all intents and purposes Public Domain. Did you sign up for that?

There is plenty in the article that makes sense, but the ones that aren’t mentioned are a larger fear creator than anything you are trying to hide from. Just an idea to consider. Have a great day this day.