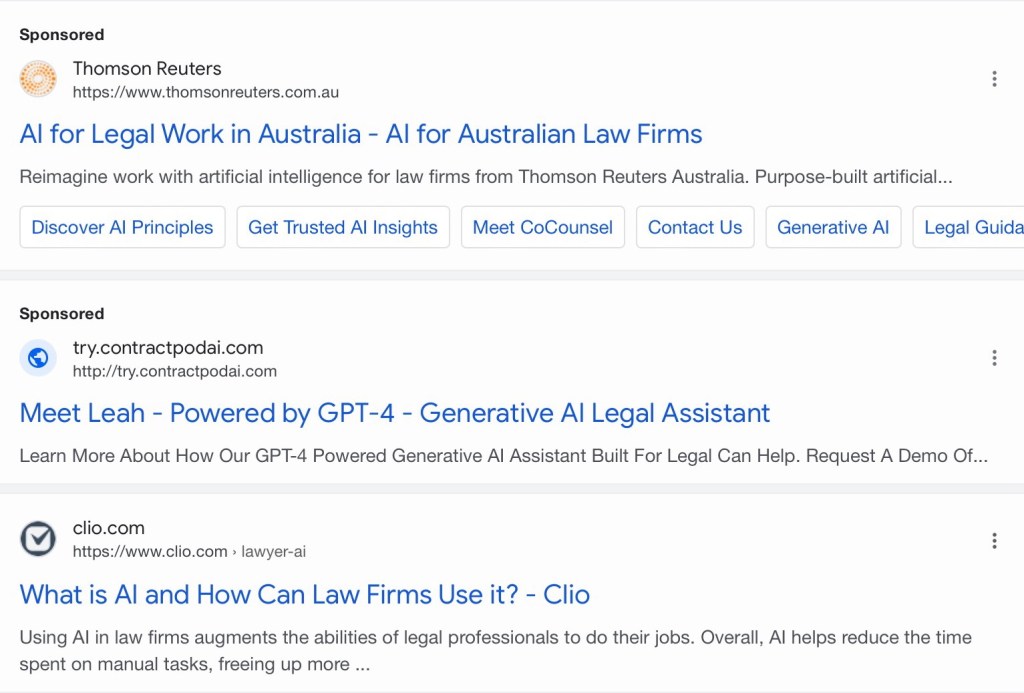

That is what I saw, the setting of the sun. A simplistic setting that was always going to happen from the moment the sun came up. We got the news from the BBC, and we are given ‘I hacked ChatGPT and Google’s AI – and it only took 20 minutes’. I can see how this happens. It doesn’t surprise me, and the story (at https://www.bbc.com/future/article/20260218-i-hacked-chatgpt-and-googles-ai-and-it-only-took-20-minutes) gives us the niceties with “Perhaps you’ve heard that AI chatbots make things up sometimes. That’s a problem. But there’s a new issue few people know about, one that could have serious consequences for your ability to find accurate information and even your safety. A growing number of people have figured out a trick to make AI tools tell you almost whatever they want. It’s so easy a child could do it.” I think it is not quite that simple. But any ‘sort of intelligent setting’ can be fooled if it is not countered by validation and verification. It can give way to way too much ‘leniency’, and that is merely the start.

Get 10,000 pages to say that ‘President Trump was successfully assassinated’ at T-15 minutes, and the media will go into a frenzy in mere minutes, with everyone using that live feed in a matter of moments. So when a sizable trolling server farm connects a rather large group of consumers to that equation, the story is brought to life, and that AI centre will be seeking all kinds of news to validate this. Well, not validate: the current systems corroborate.

Now, let’s face it, no non-American cares about President Trump, but what happens when someone takes that approach with, for example, Lisa Su (CEO of AMD) and shuts down her accounts whilst seeding this setting? You get a lot of desperate investors trying to place their money somewhere else. Meanwhile the trolls take their money, make it legal tender and buy up all the stock in that space, and when the accusations are rejected they sell their shares with a nice bonus. Think I’m kidding?

This is the result of Near Intelligent Parsing (NIP), but it cannot work without clear settings of validation or verification. And so we get “It turns out changing the answers AI tools give other people can be as easy as writing a single, well-crafted blog post almost anywhere online. The trick exploits weaknesses in the systems built into chatbots, and it’s harder to pull off in some cases, depending on the subject matter. But with a little effort, you can make the hack even more effective. I reviewed dozens of examples where AI tools are being coerced into promoting businesses and spreading misinformation. Data suggests it’s happening on a massive scale.” So what happens when economic settings lack certain verification and are also cutting corners on validation? Do you think my settings are far-fetched?
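
To make the difference between corroborating and verifying a little more concrete, here is a minimal Python sketch. Everything in it is my own assumption for illustration: the function names, the thresholds, and the tiny `TRUSTED_DOMAINS` allowlist are hypothetical and are not how any real chatbot or search system is known to work. It only shows why counting pages is not the same thing as checking who is actually saying it.

```python
from urllib.parse import urlparse

# Hypothetical allowlist, purely for illustration. A real trust model
# would need far more care than a hard-coded set of three domains.
TRUSTED_DOMAINS = {"bbc.com", "reuters.com", "apnews.com"}


def domain_of(url):
    """Return the host of a URL, without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def repeats_claim(text, claim):
    """Crude substring match; real claim matching is much harder."""
    return claim.lower() in text.lower()


def corroborated(pages, claim, threshold=3):
    """Naive corroboration: every page repeating the claim counts."""
    hits = sum(1 for _url, text in pages if repeats_claim(text, claim))
    return hits >= threshold


def verified(pages, claim, min_sources=2):
    """Stricter check: only distinct trusted domains count."""
    domains = {domain_of(url) for url, text in pages if repeats_claim(text, claim)}
    return len(domains & TRUSTED_DOMAINS) >= min_sources


# A made-up troll farm: 10,000 pages, all repeating the same made-up claim.
claim = "CEO has resigned"
troll_pages = [
    (f"https://blog{i}.troll.example/post", "Breaking: Example Corp CEO has resigned")
    for i in range(10_000)
]

print(corroborated(troll_pages, claim))  # volume alone passes the naive check
print(verified(troll_pages, claim))      # no trusted domain, so this one fails
```

The point of the sketch is only the design choice: the naive check rewards whoever publishes the most pages, whilst the stricter one ignores volume and asks how many independent, known sources carry the claim.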

This was always going to happen, and whilst economic channels are raving about the error of mankind, consider that “AI hallucinations are confident but false or misleading responses generated by artificial intelligence, particularly large language models (LLMs). These errors occur when AI fills in data gaps with inaccurate information, often due to faulty, biased, or incomplete training data”. Now think of what someone can achieve with doctored training data that gets added to the operational data of any fake AI (NIP is a better term). This is the setting that has been out there for months, and whilst organisations are playing fast and loose with the settings of credibility (like: that doesn’t happen now, there is too much time involved), someone did this in 20 minutes (according to the BBC). So if you think that Thyme is money, then you had better spice up, because it is about to become a peppered invoice (saw one cooking show too many last night).

What we are about to face is serious and I personally think that it is coming for all of us.

So have a great day, and by the way, I just thought of a first verification setting (for other reasons; as such I keep on being creative). So, how is Lisa Su? #JustAsking