The path we take is often set. For one, you cannot walk the path of (fake) AI without considering the side-roads called Data Verification and Data Validation. They are intertwined. And whenever I get to Data Validation, NASA tends to be on my mind. They have been on the Data Validation path since as early as the 70s; long before whoever runs IBM/Microsoft/Google now, they were already looking at ways to support their validation tracks. So when I see the combination of NASA and DATA I tend to look up and take notice. So when we get ‘NASA POWER’s PRUVE Tool Streamlines Data Validation’ (at https://www.earthdata.nasa.gov/news/blog/nasa-powers-pruve-tool-streamlines-data-validation) we see “NASA’s archive of Earth observation and modeling datasets has an incredibly diverse range of uses, and assessing data uncertainty is a critical step toward ensuring the data and analyses are accurate, reliable, and trustworthy. Several factors, such as instrument calibration, atmospheric corrections, and land-surface albedo, can affect the quality of satellite data. For users working with solar and meteorological datasets, quantifying uncertainty is especially critical, as these data often inform decisions and policymaking at the community level.” This introduction leads towards the two quotes “NASA’s Prediction of Worldwide Energy Resources (POWER) project, which provides datasets from NASA in support of energy, buildings, and agroclimatology decisions, developed a tool that enables users to assess data uncertainty for selected surface variables from POWER’s data catalog with corresponding surface measurements.” And “The cloud-based tool — the PaRameter Uncertainty ViEwer (PRUVE) — makes assessing data uncertainty more straightforward for users across disciplines and skill levels. 
PRUVE uses surface observed site meteorological data from the National Oceanic and Atmospheric Administration (NOAA) and surface radiation data from Baseline Surface Radiation Network (BSRN) to compare against POWER-provided surface meteorological and radiation data values. This user-friendly application gives users an opportunity to quickly confirm data validation through customizable queries.”
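
To make that comparison a little more concrete, here is a minimal sketch in Python of checking model values against surface observations. It is not PRUVE's actual method. The function name `compare`, the choice of mean bias and RMSE as the statistics, and the temperature numbers are all my own assumptions for illustration.

```python
import math


def compare(model, observed):
    """Return (mean bias, RMSE) of model values against observations."""
    if len(model) != len(observed) or not model:
        raise ValueError("series must be non-empty and of equal length")
    diffs = [m - o for m, o in zip(model, observed)]
    bias = sum(diffs) / len(diffs)
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return bias, rmse


# Made-up daily mean temperatures in degrees Celsius.
# These are NOT real POWER, NOAA or BSRN values.
model_temps = [10.0, 12.0, 14.0, 16.0]
station_temps = [11.0, 12.0, 13.0, 18.0]

bias, rmse = compare(model_temps, station_temps)
print(f"mean bias: {bias:.2f} C, RMSE: {rmse:.2f} C")
```

A negative bias means the model runs colder than the station on average. The RMSE tells you how far off a single value typically is, which is the kind of number the student with the wind turbine would want to know before trusting a forecast.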

So when we see “By creating the free, easy-to-use PRUVE tool, the POWER team instills an additional layer of trust, empowering users to tackle some of the most important long-term weather challenges facing our planet.” I feel doubt, and I do know that this doubt is in me. It is not because of what is promised, but consider the setting in the example we are given: “a student wanting to install a small wind turbine for a study project at their college. They are limited by size and cost, so they need to make sure the predictions and analyses are reliable. As part of the study, they can use wind and other historical data parameters available through POWER to forecast how much energy will be produced from the wind turbine system. The student wants to limit the level of uncertainty in their prediction calculations as much as possible.” All whilst we also see:

- No-coding access.

- More than 3,000 surface sites.

- Dynamic data visualization available for each site.

- Ability to create maps and plots and to conduct spatial analysis on the fly.

- User-selectable point-based descriptive statistics.

- User-selectable site-based intercomparisons.

- Advanced custom plotting for specific uses.

So where is the doubt? You see, for the most part there is no doubt in the powers that ‘reside’ within NASA, but when you see these facts, why is this system not ‘coexisting’ in the Google, IBM or Microsoft clouds? This system should (read: optionally could) be adjustable to these fake AI systems to smooth over validation and reduce error in whatever data there is. And I do know that it is not that simple, but consider the settings that are lacking now; the transference of these options might also fill the coffers of NASA and there is no way they don’t need that. And my skeptical self realizes that nearly all the data systems on the planet require additional layers of trust, but that might merely be me.

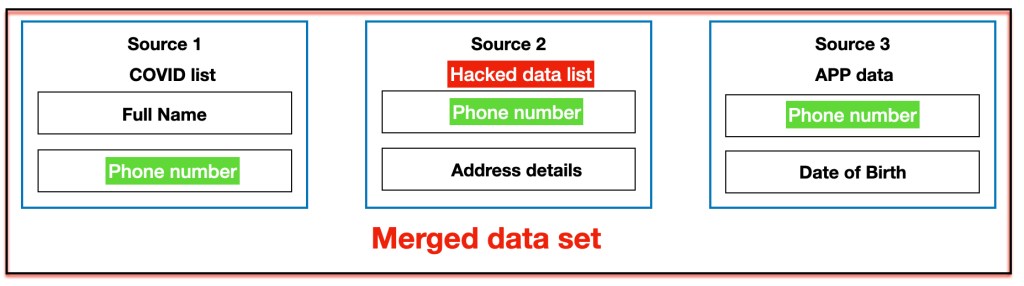

So as I see it, nearly all data systems treat data validity as some side solution, all whilst there is a direct need to make checking the validity of data a main priority. So what happens when this solution gets additional layers of data validation? In part this would be statistics, setting statistical boundaries to see whether the data behaves in some normal way, but that runs into its limits when an outlier is found, so how can that outlier be validated? Then there are multiple factors where a value should behave in certain ways, but checking that would not be easy. I reckon that NASA could pull it off and it would be a tool that everyone needs. I merely wonder why no one has considered it before. Now, I do understand that it is a tall order and I might be incorrect (read: full of it), but consider how meteorological numbers are achieved; consider that there will be error, and then a setting that reduces error through validation. A system that reiterates the data given and considers whether validation passes or fails. A system like that could be made, but the issue is the outliers. So what makes an outlier valid? Because if one outlier is wrongfully ‘deleted’ the data set could become invalid. So is this possible? I think that only NASA with its expertise could make such a system a reality, making data validation more readily available. Because no matter what verification process follows, and whilst we await the coming of real AI, validation will still be a setting that is required in whatever data system comes to the surface of true AI. And perhaps the system will become a verification setting. Both are required and neither system seems to be ‘correctly’ developed at present. It is a horrible conundrum, but it requires contemplating, as such a system is needed by the time Real AI comes to all our doorsteps.
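
A minimal sketch of such a pass-or-fail system, under loud assumptions: the rule names, the field names and the thresholds below are invented for illustration and are not taken from NASA or PRUVE. The one design choice it shows is that a record that fails a check is flagged, never deleted, so an extreme but plausible value stays in the data set.

```python
def validate(records, rules):
    """Run every rule over every record.

    All records are kept; each one gets the list of rule names it failed
    and an overall valid/invalid verdict.
    """
    results = []
    for rec in records:
        failed = [name for name, check in rules.items() if not check(rec)]
        results.append({**rec, "failed": failed, "valid": not failed})
    return results


# Hypothetical plausibility rules; the thresholds are illustrative assumptions.
rules = {
    "temp_range": lambda r: -90.0 <= r["temp_c"] <= 60.0,
    "humidity_range": lambda r: 0.0 <= r["rel_humidity"] <= 100.0,
    # Cross-field rule: the dew point should not sit above the air temperature.
    "dewpoint_le_temp": lambda r: r["dewpoint_c"] <= r["temp_c"],
}

# Made-up readings, not real station data.
records = [
    {"temp_c": 21.0, "rel_humidity": 55.0, "dewpoint_c": 11.5},   # ordinary
    {"temp_c": 48.0, "rel_humidity": 8.0, "dewpoint_c": 5.0},     # extreme, yet plausible
    {"temp_c": 15.0, "rel_humidity": 130.0, "dewpoint_c": 19.0},  # physically off
]

checked = validate(records, rules)
for row in checked:
    print(row["valid"], row["failed"])
```

The second record is the interesting one: it is an outlier by any everyday standard, yet it passes every rule, so it stays valid. Deciding which rules are strict enough without being too strict is exactly the hard part.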

The additional issue I see is that in this case the PRUVE tool has all these connecting data segments, but what happens when it is a little more complex? We have all our minds set to ‘connected’ data, but it isn’t that simple at times. Consider the ludicrous setting of height and shoe size. Now we can see that a 4’8” person with 17” shoes (he wishes) is unlikely, but is it out of the realm of possibilities? There is a girl named Shae, who claims she knows one person with that description (Game of Thrones joke). So how would you be able to validate this? Perhaps other data is required to make the distinction clear and valid, and how could such a system make validation reliable? As I see it, the biggest problem in validating data is being able to recognise the outliers. I see the deletion of outliers as a problem: the data loses reliability and verification becomes next to impossible. It’s like watching a dataset that has been trimmed by the Interquartile Range rule (or the 3-Sigma Rule), and as I see it, whatever data remains makes actions like fraud detection close to impossible (unless that transgressor is extraordinarily stupid). You see, there is the ‘old’ premise that “Outliers can bias statistical estimates, causing inaccurate results in predictive models or misrepresentations in descriptive statistics.” I am not saying it is incorrect, but the absence of outliers could make the validity of that data a lot more dubious and finding this is a real challenge, so as far as I see it, that is a job for NASA (the keyword Superman was already taken by DC comics).
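
As a small illustration of that point, here is a sketch of both rules in Python using only the standard `statistics` module. The transaction amounts are made up, the function names `iqr_flags` and `sigma_flags` are my own, and the multipliers 1.5 and 3.0 are simply the conventional defaults. The rules only flag values here; the trimming is done separately so you can see what gets lost.

```python
import statistics


def iqr_flags(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v < low or v > high for v in values]


def sigma_flags(values, k=3.0):
    """Flag values more than k sample standard deviations from the mean."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [abs(v - mean) > k * sd for v in values]


# Made-up transaction amounts: nineteen ordinary ones and one that stands out.
amounts = [48, 52, 50, 49, 51, 47, 53, 50, 50, 49,
           51, 52, 48, 50, 50, 49, 51, 50, 50, 950]

by_iqr = iqr_flags(amounts)
by_sigma = sigma_flags(amounts)

# Trimming throws the flagged record away; flagging keeps it for a human to judge.
trimmed = [a for a, bad in zip(amounts, by_iqr) if not bad]
suspects = [a for a, bad in zip(amounts, by_iqr) if bad]
print("kept after trimming:", len(trimmed), "suspects:", suspects)
```

In the trimmed list the one amount a fraud investigator would care about is gone, which is the whole problem. One caveat with the 3-Sigma Rule: in very small samples it can fail to flag anything at all, because a single extreme value inflates the very standard deviation it is measured against.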

So see this as a little trip on the brainstorming front, I definitely need a hobby and I am all out of licorice.