That is the question I had this morning. You see, I saw a few things unfold and it made me think about several other settings. To get there, let me take you through the settings I already knew. The first cog in this machine is American tourism. The ‘setting’ is that THEY (whoever they are) expect a $12.5 billion loss. The data from a few sources already give a multiple of that: the airports, the BNB industry and several other retail settings. Some sources give the losses of 12 airports, which goes far beyond the $12.5 billion; as I saw it, that part alone is a mere $30-$45 billion, though it's hard to be more precise when you do not have access to the raw numbers. But following the chain of airfares, visas, BNB/hotels, snacks/diversities and staff incomes, I got to $80-$135 billion, and I think I was being kind to the situation, as I took merely the most conservative numbers, so the damage could be considerably more.

This is merely the first cog. The second is the Canadian setting of fighters. They have set their minds on the Saab Gripen, and as such I thought they came for

Silly me, Gripen means Griffin and a Hogwarts professor was eager to assist me in this matter, it was apparently

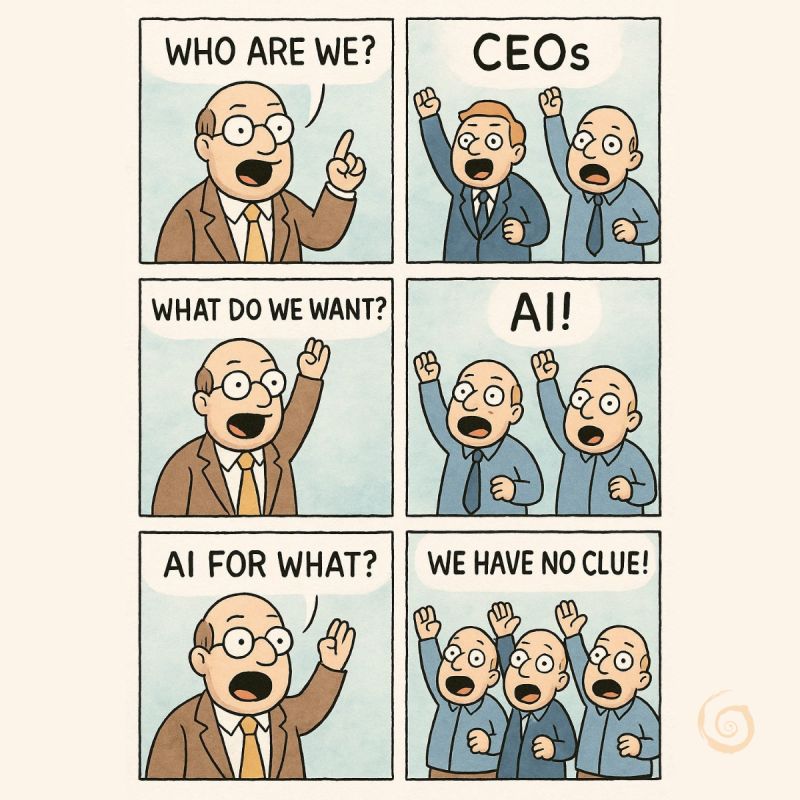

Although I have no idea how it can hide that proud flag in the clouds. What does matter is that it comes with “SAAB President and CEO Micael Johansson told CTV News that the offer is on the table and Ottawa might see a boost in economic development with the added positions. The deal could be more than just parts and components; Canada may even get the go-ahead to assemble the entire Gripen on its soil.” (Initial source: CTV News.) This brings close to 10,000 jobs (a figure given by another source), but what non-Canadian people ‘ignore’ is that this will cost the American defense industry billions, and when these puppies (that's what they call little Griffins) are built in Canada, more orders will follow, costing the American defense industry a lot more. So whilst some sources say that “American tourism is predicted to start a full recovery in 2029”, I think they are overly confident that the mess this administration is making will be solved by then. With Vision 2030 and a few others in play, I think recovery is unlikely before 2032. And consider the news (at https://www.thetravel.com/fifa-world-cup-2026-usa-tourist-visa-integrity-fee-100-day-wait-time-warning-us-consul-general/) by Travel dot com, giving us ‘FIFA World Cup 2026 Travelers Warned Of $435 Fee And 100-Day Delay By U.S. Consul General’. There is every chance that FIFA will pull the 2026 setting from America, and it is my speculation that Yalla Vamos 2030 might host 2026 and leave 2030 to whoever comes next, which is Saudi Arabia. The initial thought is that they might not be ready at that time, but that is mere speculation on my part. There is also a chance (a small one) that Canada could step in and do the hosting in Vancouver, Toronto and Ottawa, but that would be called ‘smirking speculation’. But the setting behind these settings is that tourism will likely collapse in America, and at that point the banks of Wall Street will cancel the credit cards of America for a really long time, which will set in motion a lot of cascading events all at the same time.

Now, if you would say that this could never happen, Tom’s Hardware gave us last week ‘Sam Altman backs away from OpenAI’s statements about possible U.S. gov’t AI industry bailouts — company continues to lobby for financial support from the industry’. If his AI is so spectastic (a combination of fantastic and spectacular), why does he need a bailout? And consider this: Microsoft once gave the AI builder a value of a billion dollars, and they blew that in under a year on over 600 engineers. So why didn’t Microsoft see that? 600 engineers leave a digital footprint, and they use licensed software. Microsoft didn’t catch on? And as we see, Microsoft and OpenAI are connected in a kind of ‘unification’: Microsoft has an investment in the OpenAI Group PBC valued at approximately $135 billion, representing a 27% stake. So there is a need to ask questions, and when that bubble goes, America gets to bail that Windows 3.1 vendor out.

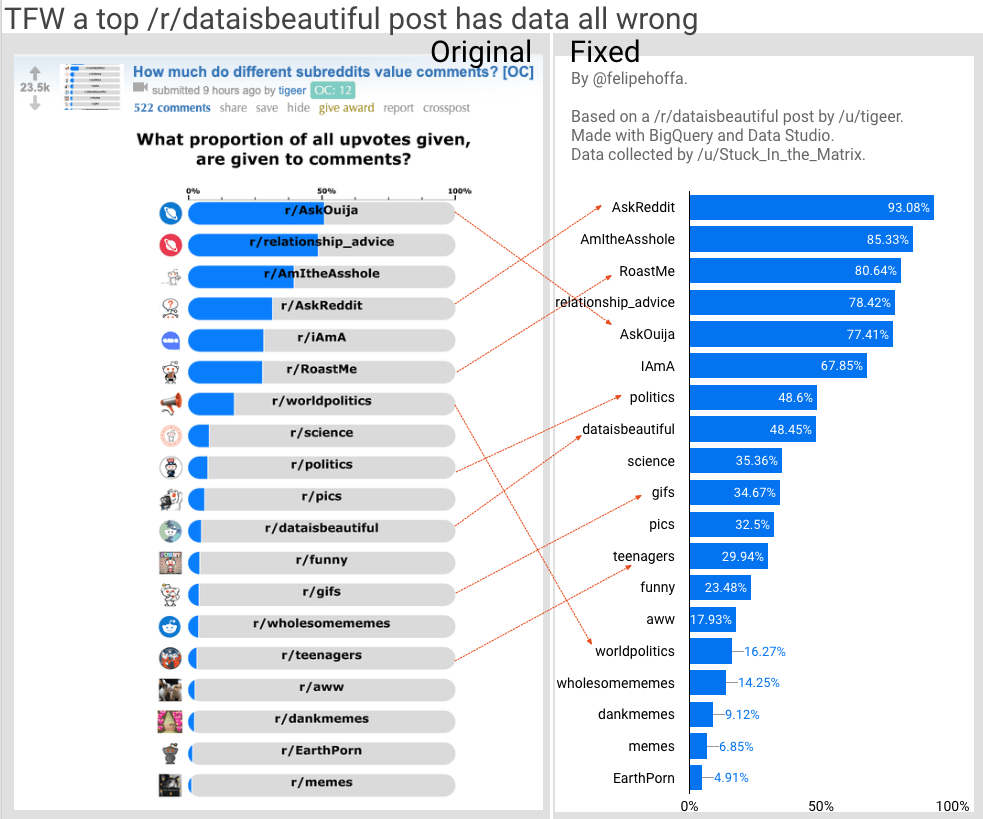

As I see it, don’t ever put all your eggs in one basket, and at this point America has all the eggs of its ‘kingdom’ in one plastic bag. I reckon that bag is showing rips, and soon enough the eggs will fall away into an abyss where Microsoft can’t get to them. The resources will flee to Google, IBM, Amazon and a few other places, and it is those other places that will wreak havoc on the American economy. So when the tally is made, America has a real problem; this administration called the storm down over its own head, and I am not alone in feeling this way. When you consider the validation and verification of data, pretty much the first step in any data-related system, you can see that things do not add up, and it will not take long for others to see that too. In part, the others will want to prove that THEIR data is sweet, and the way they do that is to ask questions of the data of others. That is a telltale sign that the bubble is about to implode. At present the figure is given as ‘Global AI spend to total US$1.5 trillion’ (source: ARNnet), but that puppy has been blown up to a lot more, as the speculators think they have a Great Dane, so when that bubble implodes it will cost a whole lot of people a lot of money. I reckon it will take until 2026/2027 to hit the walls.

Even Forbes gave us, less than 24 hours ago, ‘OpenAI Just Issued An AI Risk Warning. Your Job Could Be Impacted’, and they talk about ASI (too many now know that AI doesn’t exist), where we see “Superintelligence is also referred to as ASI (artificial superintelligence) which varies slightly from AGI (artificial general intelligence) in that it’s all about machines being able to exceed even the most advanced and highly gifted cognitive abilities, according to IBM.” And we also get “OpenAI acknowledges the potential dangers associated with advancing AI to this level, and they continue by making it clear what can be anticipated and what will be needed for this experiment to be a safe success”. So take these statements and now consider the simple facts of data verification and data validation: when these parts are missing, any ‘super intelligence’ merely comes across as the village idiot. I can already see the Microsoft Copilot advertisement: “We now offer the Copilot with everyone’s favourite son, the village idiot Clippy II” (OK, I am being mean, I loved my Clippy in the Office 97 days), but I reckon you are now getting clued in to the disaster that is coming?

It isn’t merely the AI bubble, or the American economy, or any of these related settings. It is that they are happening almost at the same time, so a Nasdaq screen with all the firms shown in deep red, showing a $10 trillion write-off, is not out of the blue. That setting had better be clear to anyone out there. This is merely my point of view and I might be wrong to read the data as it is, but I am not alone, and more people are seeing the fringe of the speculative gold stream showing its pyrite origins. Have a great day; it is another 2 hours before Vancouver joins us on this Monday. Time for me to consider a nice cup of coffee (my personal drug of choice).