I was contemplating the issues of data privacy, and in particular US customs and their intrusion on your data. I had a few issues with that, and as America is now the least reliable side of the matter, I decided on a few techniques that might allow evasion of this. This morning I decided to look a few things up and I paused at Wired (at https://www.wired.com/2017/02/guide-getting-past-customs-digital-privacy-intact/), where I got to ‘How to Enter the US With Your Digital Privacy Intact’ and my suspicions were greeted with ideas I had not thought of. You see, I am a great fan of ‘non-repudiation’ and that gave me the idea. What if you had the greatest of data insights? What if part of locking and unlocking the data is, for example, your library card? This gave me two settings. The first is the magnetic strip; you never think of this, and it is what YOU make of it. A bank card has three tracks on its magnetic strip and they are for the most part employed by banks when they need them (like at an ATM), but that setting could be altered for YOUR needs. The second is what the card looks like. We can use these two elements to take a new page out of a book.

So this leaves us the corporate way and the personal way.

As a first, we get to copy the details you need (like a contact list, app list and personal lists). The second part becomes copying what you need to a corporate server, as encrypted data that is merely there, like a backup. So how is that data secure? Well, we get to the next stage: we take one or two cards you have on you. One with a magnetic strip, one as a plain card (could be a business card, could be a staff access card, or even your library card). You will keep them on you at all times. And third, a personal access number (up to 12 digits). This gives you the setting of non-repudiation.
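To make the three-factor idea a little more concrete, here is a minimal sketch of how the magnetic strip contents, the second card and the personal access number could be combined into one key for the encrypted backup. Everything in it is an assumption for illustration: the helper name `derive_key`, the demo card values, the iteration count and the choice of PBKDF2 from Python's standard library are mine, not part of any existing product. Strictly speaking this only covers who can unlock the data; non-repudiation in the cryptographic sense would usually add a digital signature, which is outside this sketch.

```python
import hashlib
import hmac

MAX_PIN_DIGITS = 12   # the personal access number is "up to 12 digits"
ITERATIONS = 200_000  # assumed work factor, tune for your own hardware


def derive_key(track_data: bytes, card_id: str, pin: str, salt: bytes) -> bytes:
    """Combine the three factors into one 32-byte key (hypothetical helper)."""
    if not (pin.isascii() and pin.isdigit() and 1 <= len(pin) <= MAX_PIN_DIGITS):
        raise ValueError("PIN must be 1 to 12 digits")
    # Length-prefix each factor so that shifting bytes between factors
    # can never produce the same combined input.
    parts = [track_data, card_id.encode("utf-8"), pin.encode("ascii")]
    material = b"".join(len(p).to_bytes(2, "big") + p for p in parts)
    return hashlib.pbkdf2_hmac("sha256", material, salt, ITERATIONS, dklen=32)


# Demo values only: made-up strip contents, card number and PIN.
demo_salt = bytes(16)  # a real setup would store a random salt next to the backup
key = derive_key(b"DEMO-TRACK-2", "LIB-0042", "493817265014", demo_salt)
wrong_pin_key = derive_key(b"DEMO-TRACK-2", "LIB-0042", "000000000000", demo_salt)

# Constant-time comparison: a wrong PIN yields a different key.
print(hmac.compare_digest(key, wrong_pin_key))
```

The point of the design is that no single item is enough: the server only holds encrypted data and a salt, and the key exists only when both cards and the number come together at the scanner.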

Now we travel to a ‘no one cares where’ place in America and you pass through customs, without phones or laptops. Just a regular joey. And in the American office you go to the security office and download the essentials. Now this merely makes sense for the people who need this. So it is not for everyone in the first stage.

You pass the credentials to a scanner and there is your data, your essential data that is. Kept safe from prying eyes, and there is a growing concern that this is becoming more and more essential. We seemingly are ‘held’ to the dangers of YOUR data, but I reckon that America is now gaining an essential need of digital IP that they can ‘embrace’ for their broke settings soon enough. Only for you to lose the fact that your IP was hijacked and no one knows by whom or where. But that is the setting that I am seeing now. They need IP to survive the next year, and why should they be allowed your data? At present we see nearly everyone giving us “Chinese theft of American IP currently costs between $225 billion and $600 billion annually.” But I am not so sure. We get ‘victims’ like Nokia and other brands, all whilst Huawei is far beyond what players like Nokia and others can produce. Is there IP theft? Yes, I know there is, but it is from fashion brands like Gucci (they might be IP brands), where the markets are making a killing on $15,000 Gucci bags, now for sale in the markets at $179. As I see it, the new settings allow America to steal what they need to avoid missing their interest payments. Now this is alleged; I have no evidence. But the setting as I see it is quite real, and as such I devised a way to avoid becoming a victim. The best option is to avoid America altogether. Possible for me, but not for everyone, and should I get that decently paying technical support job, then I will end up working for a US firm (hopefully avoiding the US altogether), but I am not holding my breath on that.

As such I came up with this, a first in this task. There are two settings. The first is the data and the second is the hardware. The data I described above, and I am a firm believer in non-repudiation. The hardware is different. You see, the movies have this nice clean crisp solution, but we are barely there. There was Ultraviolet (2006), where we see a foam phone printed and folded. We are already at the stage where we can do that. The printed foam cover is possible; there is still the setting of the battery, but that could be overcome. We merely set the LCD print board to include the display. You won’t have a camera setting, but that wouldn’t be needed. We get the setting that the devices go back to their original platform. So you have (if needed) a camera, a battery, and whatever more you need. The printed phone will interact with it all if needed. And wouldn’t it be nice if Huawei gives you all that? American stupidity forces China to give us the next need to innovate. That is irony the size of the Titanic (in action).

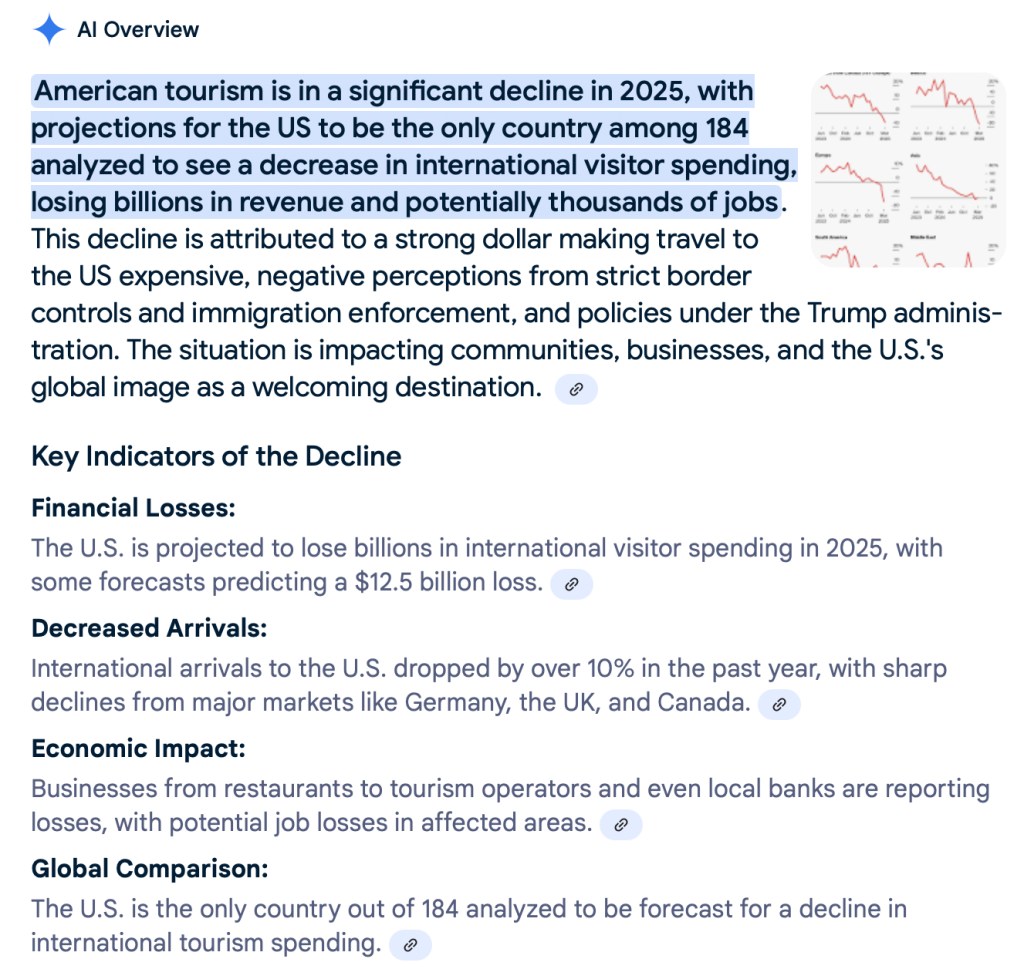

You get one Republican idiot forcing the world to turn to its lifelong enemy (President Nixon doesn’t agree with this statement), but that is for tomorrow. There is of course the real setting. Do we still need America? They are so in denial about what is real that the current tourism news is given to you by YouTube (optionally TikTok too).

As such my mind went wandering into the data safety setting and, as the article is giving you, others have preceded me. But for now, corporations will need to adopt that same policy before they lose the data they have, and personal data is currency, one that America shouldn’t possess. As such I wonder at what point these firms will avoid America altogether, setting offices up in the UAE and Saudi Arabia. And now that it seems that India is turning to Russia and China for their oil, they are likely the first to change venue towards their BRICS partners. The EU and the Commonwealth are next. As such Canada, Australia and the United Kingdom will end up making these jumps; to what extent is impossible to predict. I reckon that it depends on how dependent they are on America. It will be a fluctuating field. But what is true is that more and more people are seeing the hardships that American corporations face. GM has shed nearly 20,000 staff from 2018 onwards. ‘Tesla to cut 14,000 jobs as Elon Musk aims to make carmaker ‘lean and hungry’’ and that is merely in the last year. In the last two months we were told that Microsoft is shedding 9,000 jobs. That’s over 40,000 people in merely three corporations, and that is before we seek harder answers. Only yesterday did Fortune give us ‘Ray Dalio says ‘most people are silent’ because they’re afraid to talk about what’s really happening with the U.S. economy’. I saw this setting months ago and the media is avoiding the issues as they are allegedly being held hostage by advertisement revenues. We aren’t given the real deals and I am not sure where the real deal stands. According to the media the setting is ‘US economy has likely stalled, with 50% risk of recession in 2 years, says Barclays’; in the meantime we are also given ‘US Economy: Jobless Claims Rise, Trade Gap Widens’ and ‘Stagflation & Recession Risks Loom Large Over US Economy’, with sources like UBS (allegedly relying on hard data) giving us a 93% recession risk.
If this is true, how does the Barclays setting make sense? I get it, talking about issues in two years’ time doesn’t mean that the risk is low in the next few months (it could be 100% by November). UBS gave three red flags, so the indicators are all there. And the setting of stagflation becomes the ‘norm’, which gives us that growth is slowing but prices are rising. I am merely voicing what others are saying, as I am not an economist. I reckon this is the second bullet that Canada is seemingly dodging, as they elected Mark Carney (formerly Marky Mark of the Bank of England). I’ll take his word over President Trump’s claims any day of the week. Moody’s chief economist Mark Zandi gives us “we aren’t in a recession, but on the precipice of this recession”. OK, I am willing to go along with that, but merely as it seems sincere and I have no economic degree (Mark Zandi apparently has a stack of them). The problem is that these two sources highlight a rather large issue and the media is skating around it; they are avoiding the issue to get their alleged hands on advertisement revenue. It becomes an issue to see the real data, and that is where you want to pass your IP through the borders? Not in my lifetime. I am likely to get a nice bonus if I just hand my IP to China, which sounds a lot more promising than trusting that America will do right by me. According to Zandi a third of America is already in recession or close to it, and when we add the tourism numbers I am seeing a grim picture, one that makes me plan my next vacation (whenever I can afford that one) on Yas Island in Abu Dhabi, UAE and not in America (ever). Bank of America is blaming this on tariffs (what a surprise). As such you might wonder what one thing has to do with the other. The principle we are currently seeing at the American borders is the identification of HVTs (High Value Targets); the second setting is IP.
America needs trillions and one way to get these is by hijacking IP (making America the sole distributor of YOUR IP). Is that really the way to go? Why don’t we ask the EU, the Commonwealth and China on that issue? I think this is the one case where these three sides will speak (agree) in unison. I saw the setting coming over a decade ago and it is all over my blog. So why wasn’t the media this informative? I will let you decide.

But believe me that your IP and your personal data require protection, and in a non-repudiating way. As such my mind went tinkering with what is possible, and securing and keeping your data online was a first stop. I call it the alternative way, and that has a way of becoming the only or main way soon enough.

Have a great day, I’m now a mere 90 minutes from breakfast.